Following up on my earlier posts about training and using TensorFlow models on the edge in Python, this eighth post in the series covers how to train a multi-label image classification model that can be used with TensorFlow.

Series Pit Stops

- Training a TensorFlow Lite Image Classification model using AutoML Vision Edge

- Creating a TensorFlow Lite Object Detection Model using Google Cloud AutoML

- Using Google Cloud AutoML Edge Image Classification Models in Python

- Using Google Cloud AutoML Edge Object Detection Models in Python

- Running TensorFlow Lite Image Classification Models in Python

- Running TensorFlow Lite Object Detection Models in Python

- Optimizing the performance of TensorFlow models for the edge

- Training a Multi-Label Image classification model with Google Cloud AutoML (You are here)

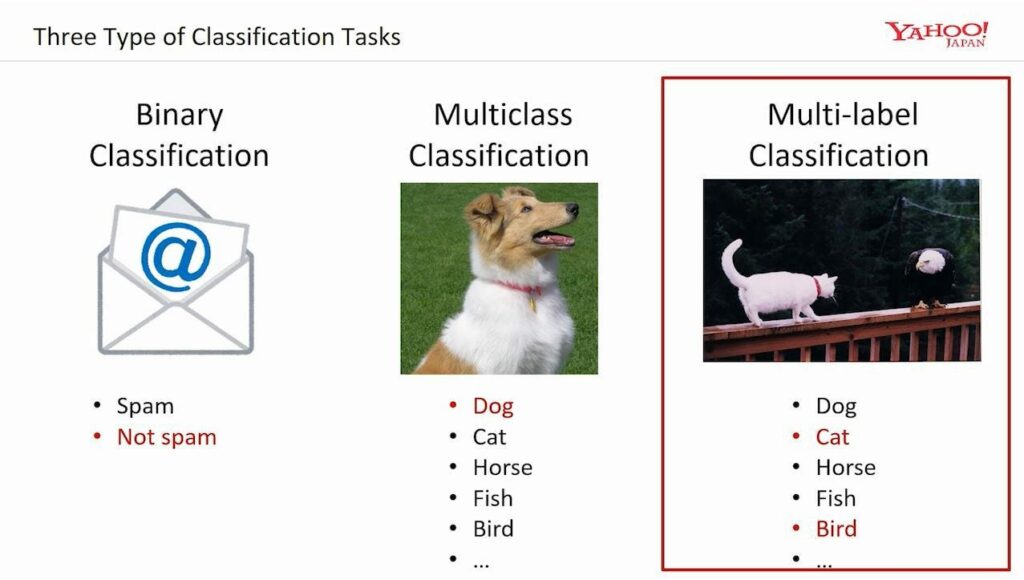

Unlike the image classification model that we trained previously, multi-label image classification lets us assign more than one label to an image:

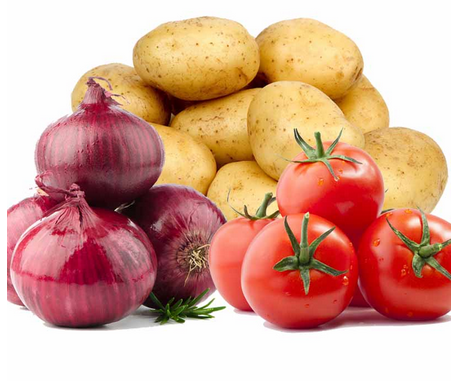

A very powerful use case for this type of model could be in a recipe suggestion app that lets you take an image of grocery items that you have and then suggests a recipe based on the items it recognizes and labels.

Consider the image above. With single-label classification, our model could only detect the presence of a single class in the image (for example, tomato or potato or onion). With multi-label classification, the model can detect the presence of more than one class in a given image (for example, tomato, potato, and onion).
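To make the difference concrete, here's a minimal sketch of the two decision rules. The scores and the 0.5 threshold are made-up values for illustration, not output from a real model: single-label classification keeps only the top-scoring class, while multi-label classification keeps every class whose score clears a threshold.

```python
# Hypothetical per-class scores a model might produce for one image.
scores = {"tomato": 0.91, "potato": 0.78, "onion": 0.66, "carrot": 0.04}


def single_label(scores):
    """Return only the highest-scoring class."""
    return max(scores, key=scores.get)


def multi_label(scores, threshold=0.5):
    """Return every class whose score meets the threshold, sorted by name."""
    return sorted(label for label, score in scores.items() if score >= threshold)


print(single_label(scores))  # tomato
print(multi_label(scores))   # ['onion', 'potato', 'tomato']
```

Raising the threshold makes the multi-label rule stricter, so fewer labels are returned per image.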

This is just one small example of how multi-label classification can help us, and you can certainly think of many more.

While training such models usually requires a powerful machine and background knowledge of ML frameworks, AutoML removes both requirements. You can offload the training process to Google's servers and then export the edge flavor of the trained model as a tflite file to run in your Android/iOS apps, or as a pb file to run in a Python environment.

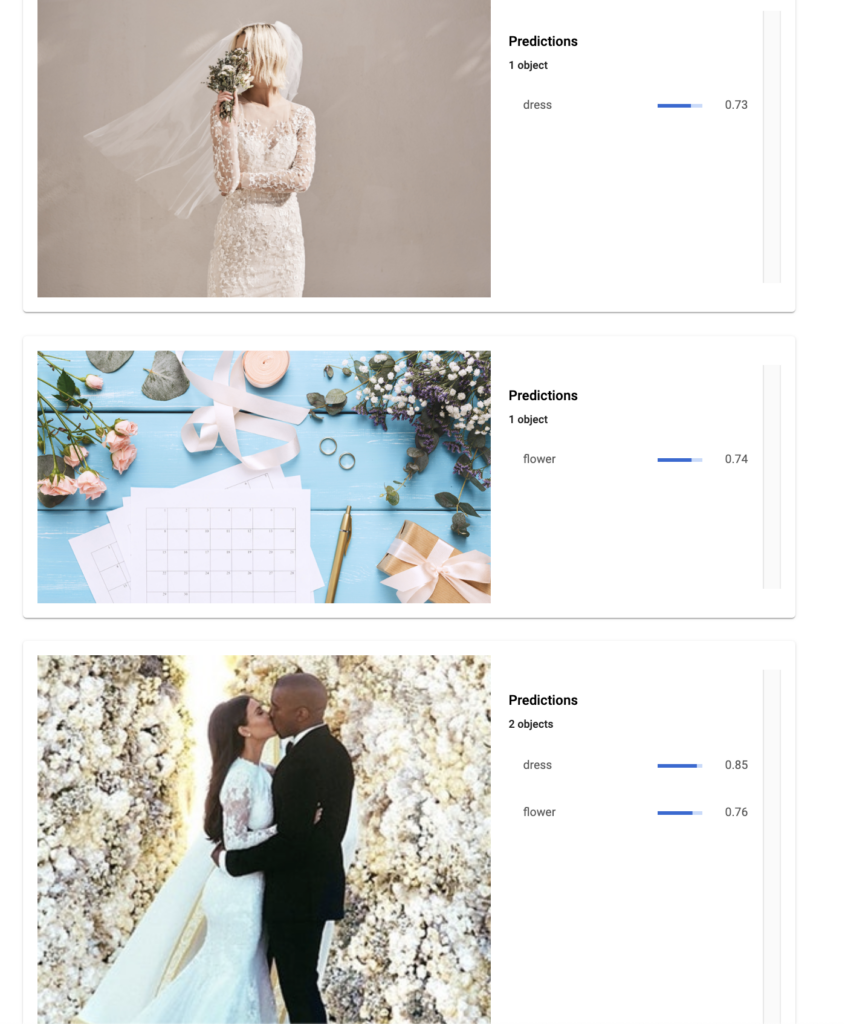

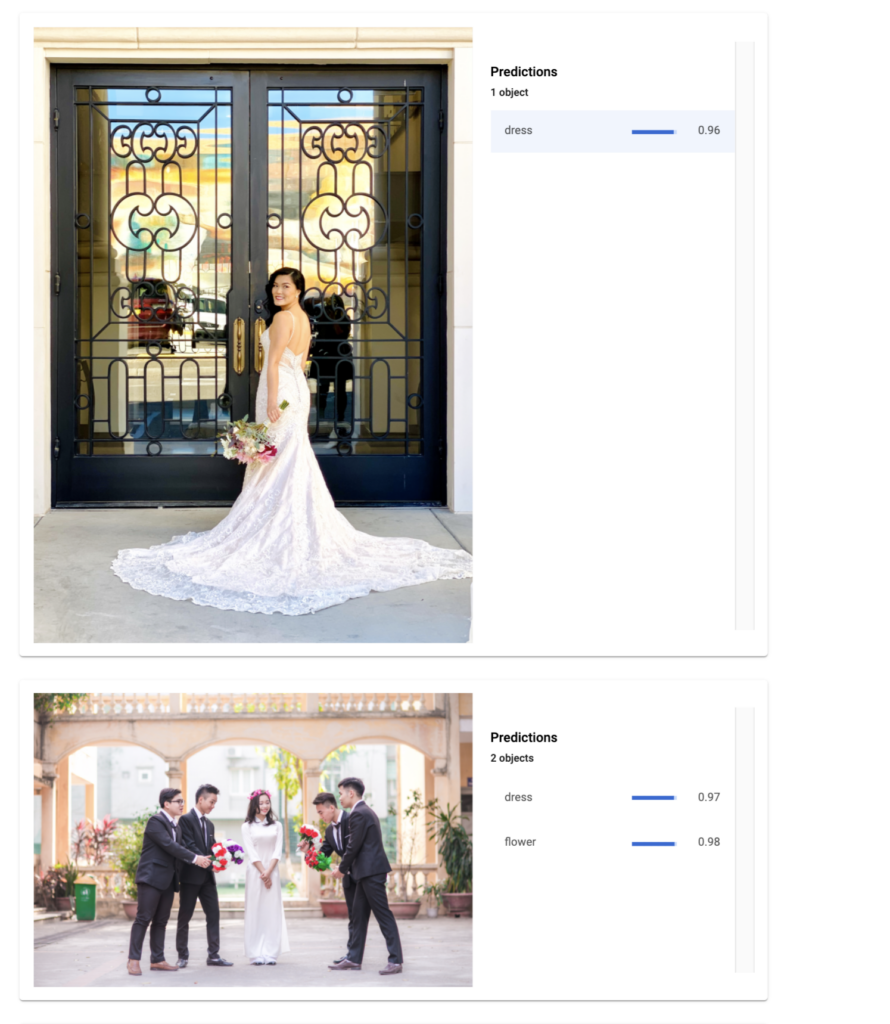

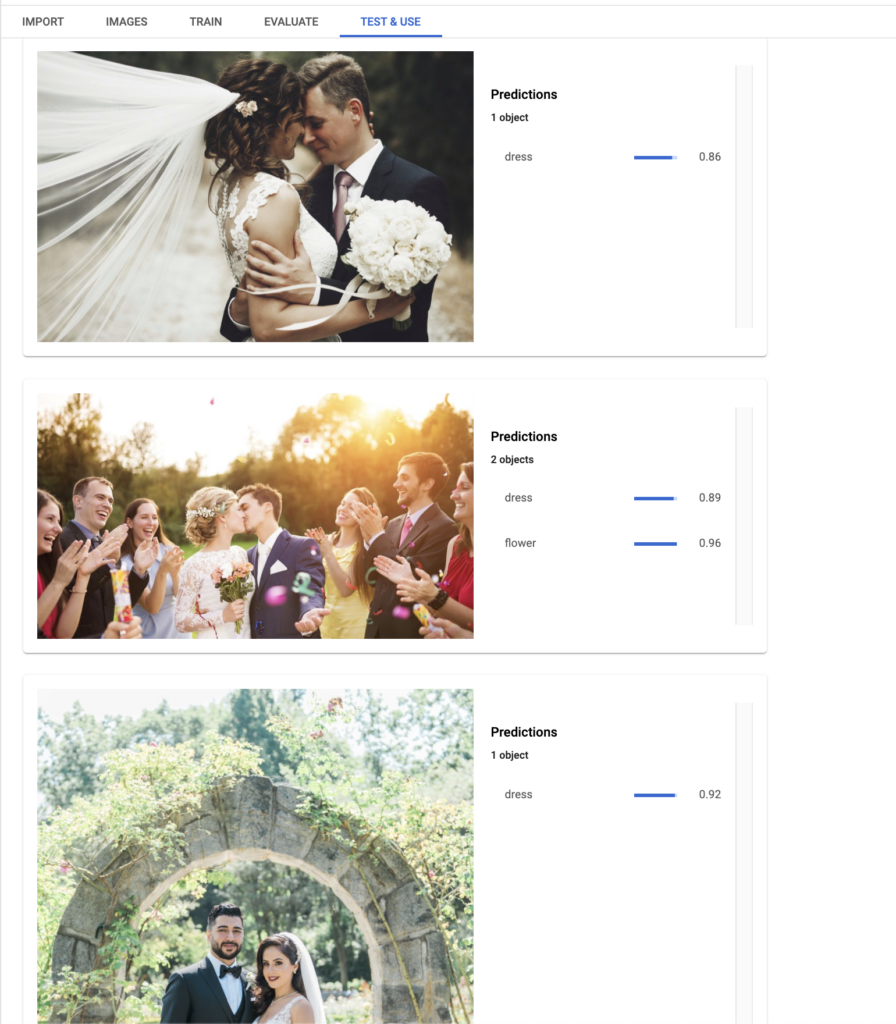

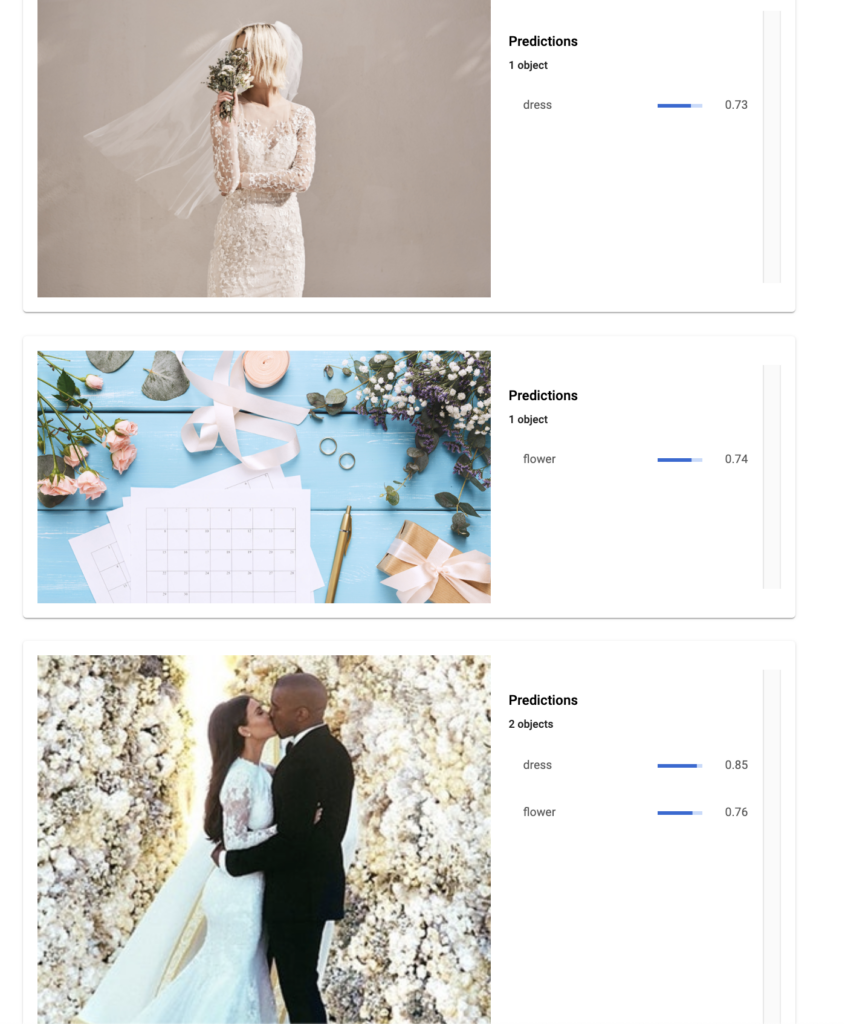

I recently used this product to train a multi-label model to identify both wedding dresses and flowers in an image, and here are some results:

Let’s now explore how you can train a similar model of your own in less than 30 minutes 🙂

Step 1: Create a Google Cloud Project

Go to https://console.cloud.google.com and log in with your account or sign up if you haven’t already.

All your Firebase projects use parts of Google Cloud as a backend, so you might see some existing projects in your Firebase Console. Either select one of those projects that are not being used in production, or create a new GCP project.

Step 2: Create a new dataset for multi-label image classification

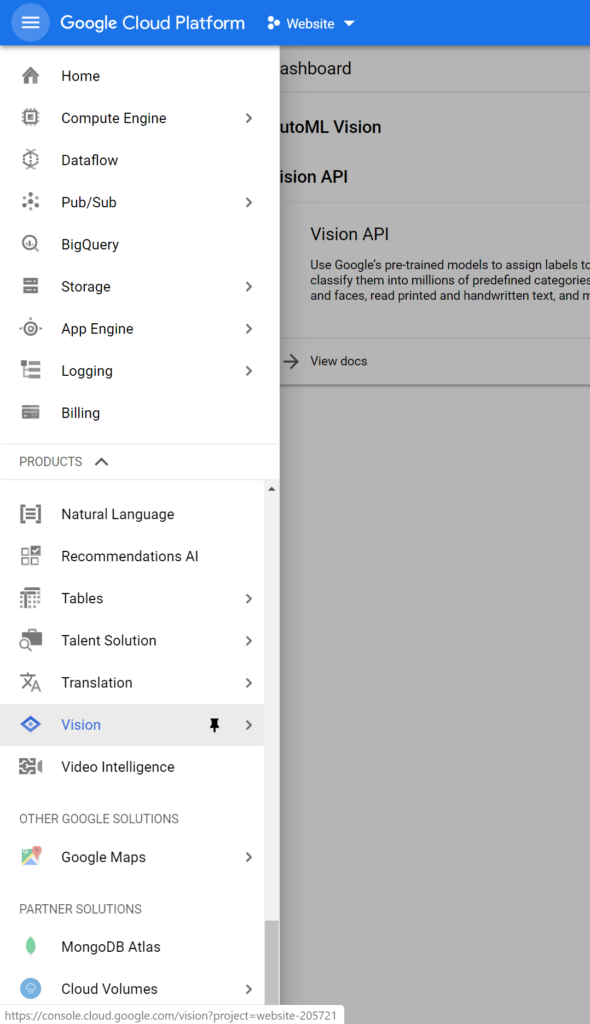

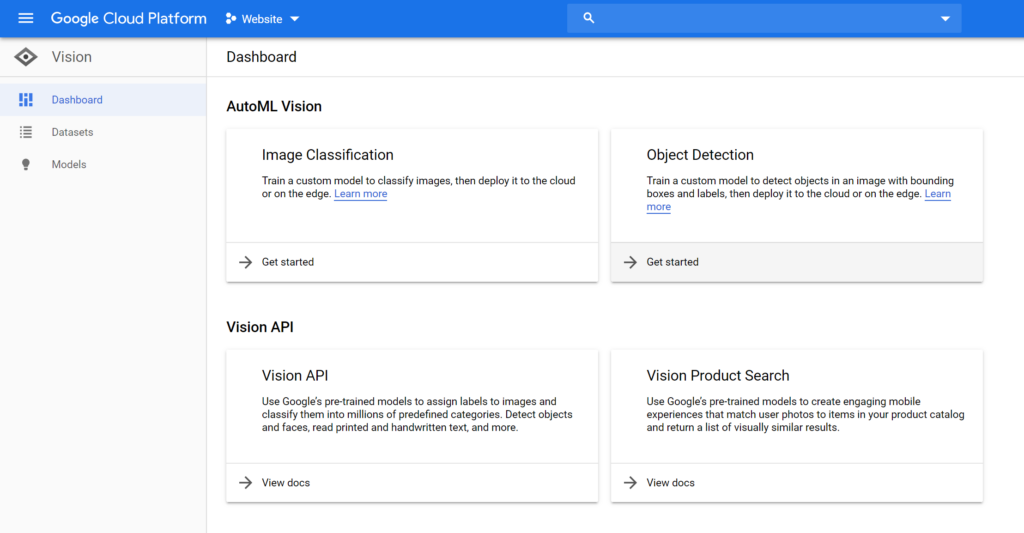

Once in the console, open the sidebar on the left and scroll to the very bottom until you find the Vision tab. Click on that.

Once here, click on the Get Started button displayed on the Image Classification card. Make sure that your intended project is being displayed on the top dropdown box:

You might be prompted to enable the AutoML Vision APIs. You can do so by clicking the Enable API button displayed on the page.

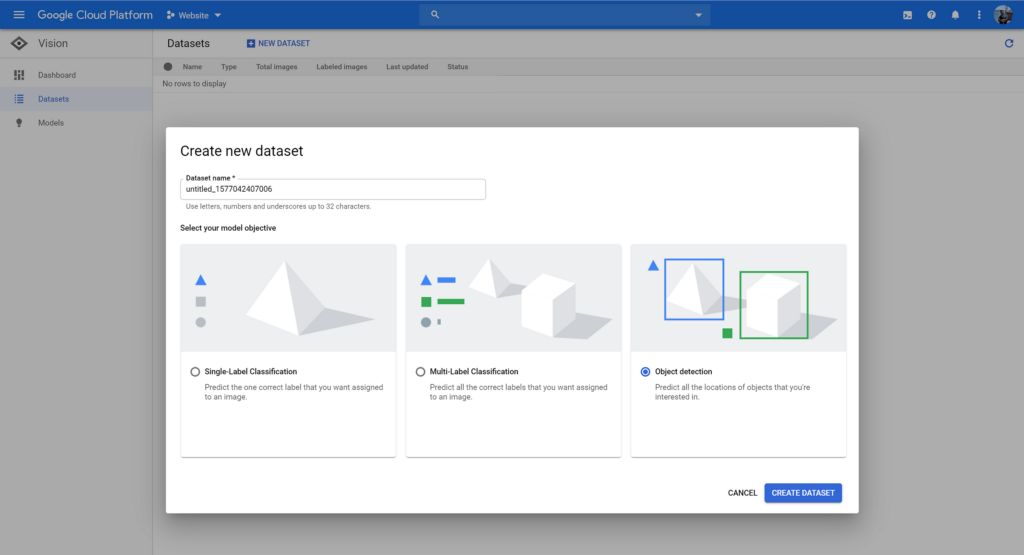

Lastly, click on the New Dataset button, give it a proper name, and then select Multi-Label Classification in the model objectives.

Step 3: Preparing the dataset of images

I've covered how to prepare a good dataset at length in one of my earlier blog posts. You can read it here if you'd like:

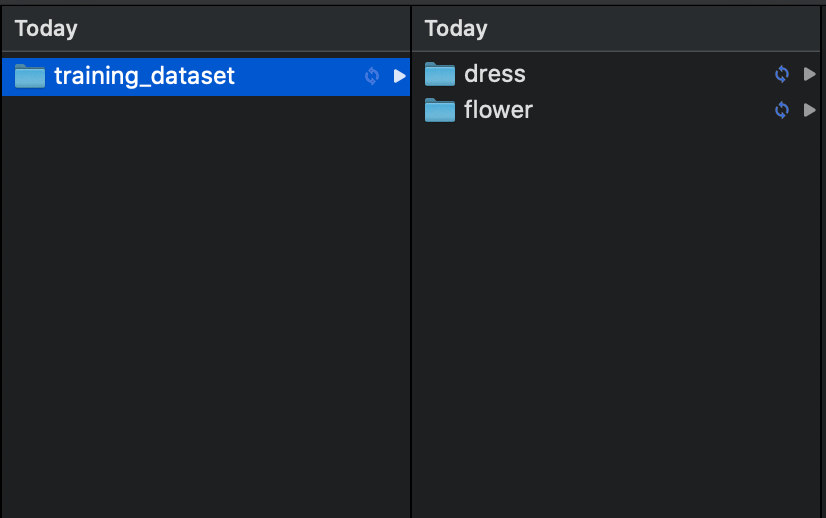

For this post, all we need is for our images to be placed in separate folders based on the labels that have been assigned to them.

For example, an image that contains a wedding dress will be placed in a folder called dress, whereas an image containing flowers will be placed in a folder called flowers. Images that contain both of these things will be placed in both these folders.

Here’s what the end result will look like:

Once done, zip the folder containing these two folders.
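If you'd rather script this than sort the files by hand, here's a rough sketch of the same folder layout built in Python. The function names, the `dataset` folder name, and the image file names are all hypothetical; the only thing taken from the steps above is the rule that each image is copied into one folder per label, and that the parent folder is then zipped.

```python
import shutil
from pathlib import Path


def build_dataset(labels_by_image, output_dir):
    """Copy each image into one folder per label it carries.

    labels_by_image maps an image path to the list of labels for that image.
    An image with several labels is copied into several folders.
    """
    output_dir = Path(output_dir)
    for image_path, labels in labels_by_image.items():
        image_path = Path(image_path)
        for label in labels:
            label_dir = output_dir / label
            label_dir.mkdir(parents=True, exist_ok=True)
            shutil.copy2(image_path, label_dir / image_path.name)
    return output_dir


def zip_dataset(dataset_dir):
    """Zip the dataset folder and return the path of the archive.

    The archive is written next to the folder, e.g. dataset -> dataset.zip.
    """
    dataset_dir = Path(dataset_dir)
    archive = shutil.make_archive(
        str(dataset_dir),             # archive path without the .zip suffix
        "zip",
        root_dir=dataset_dir.parent,  # paths in the archive are relative to this
        base_dir=dataset_dir.name,    # only this folder is included
    )
    return Path(archive)


# Example usage (hypothetical file names):
# dataset = build_dataset(
#     {
#         "photos/img_001.jpg": ["dress"],
#         "photos/img_002.jpg": ["flowers"],
#         "photos/img_003.jpg": ["dress", "flowers"],
#     },
#     "dataset",
# )
# print(zip_dataset(dataset))
```

Copying (rather than moving) keeps the original photos untouched, at the cost of some duplicated disk space for images that carry more than one label.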

Step 4: Importing images for training

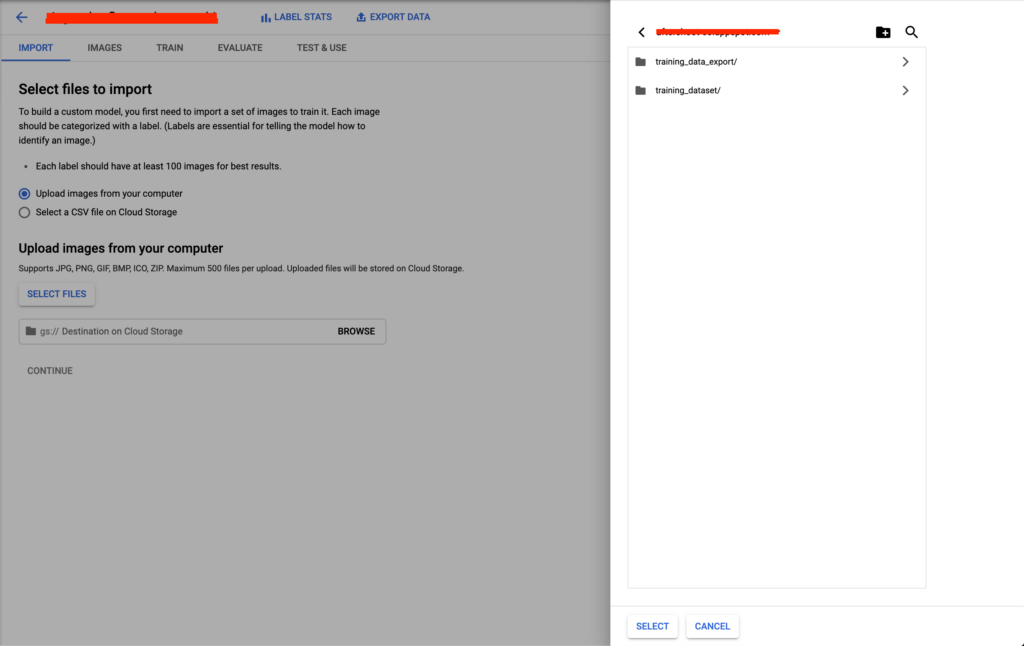

Once the dataset of training images is prepared, it’s time for us to head back to our Google Cloud Console and navigate back to the dataset we created earlier. Here, we’ll be asked to upload the initial training set, along with the location of the Cloud Storage Bucket used to store this dataset.

Click on Select Images and choose the zip we just created above. After this, click on the Browse button next to the GCS path and select the bucket named your-project-name.appspot.com:

Once done, press Continue and wait for the import process to complete.

Step 5: Training the model

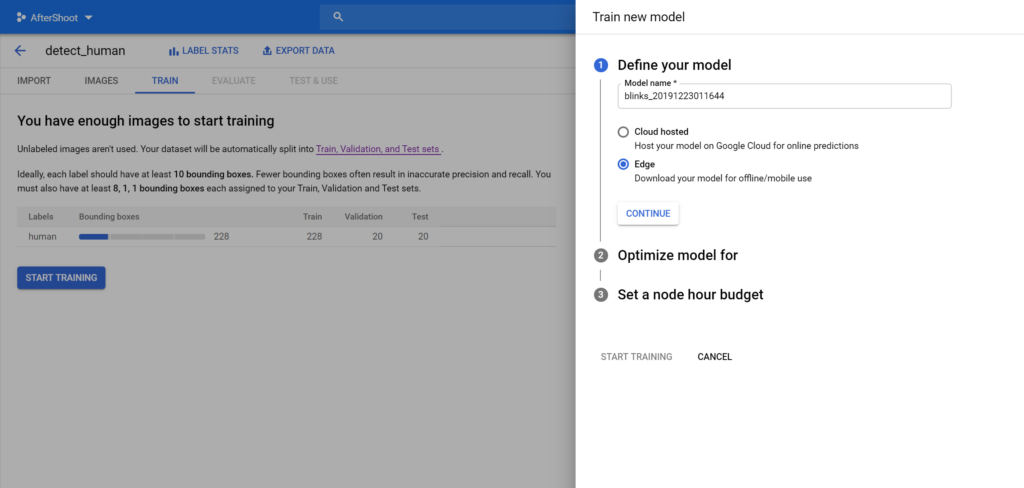

Once the import process completes, it’s time to train the model. To do this, head over to the Train tab and click the button that says Start Training.

You’ll be prompted with a few choices once you click on this button. Make sure that you select Edge in the first choice as opposed to Cloud-Based if you want tflite models that you can run locally.

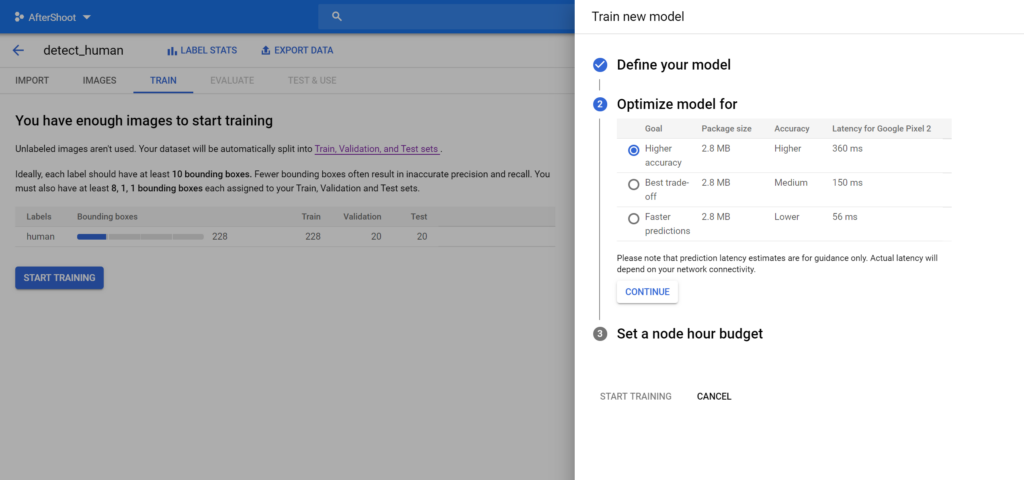

Press Continue and choose how accurate you need the model to be. Note that more accuracy means a slower model, and vice versa:

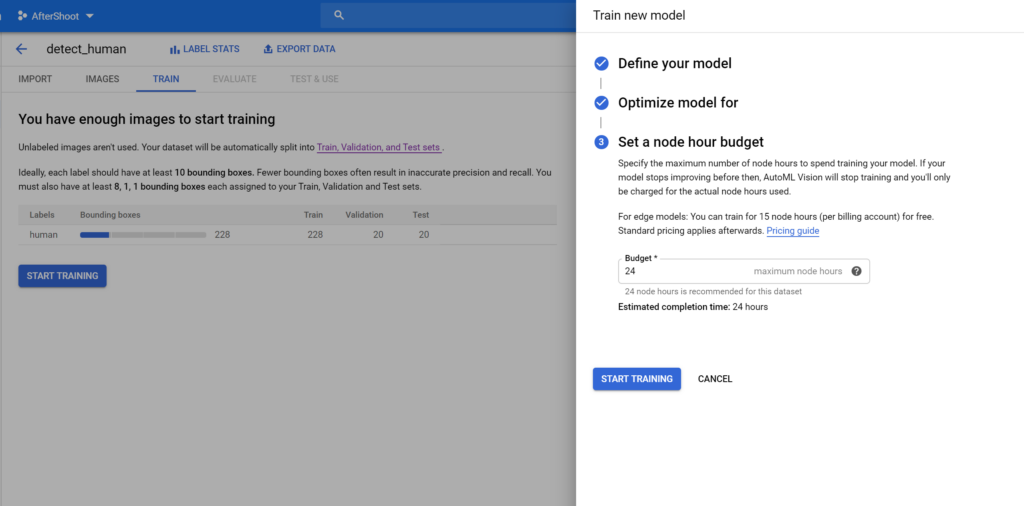

Lastly, select your preferred budget and begin training. For edge-based models, the first 15 hours of training are free of cost:

Once model training has started, sit back and relax! You’ll get an email once your model has been trained.

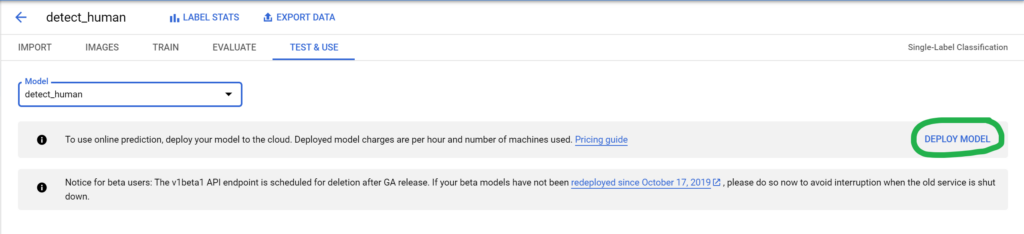

Step 6: Deploying and testing the trained model

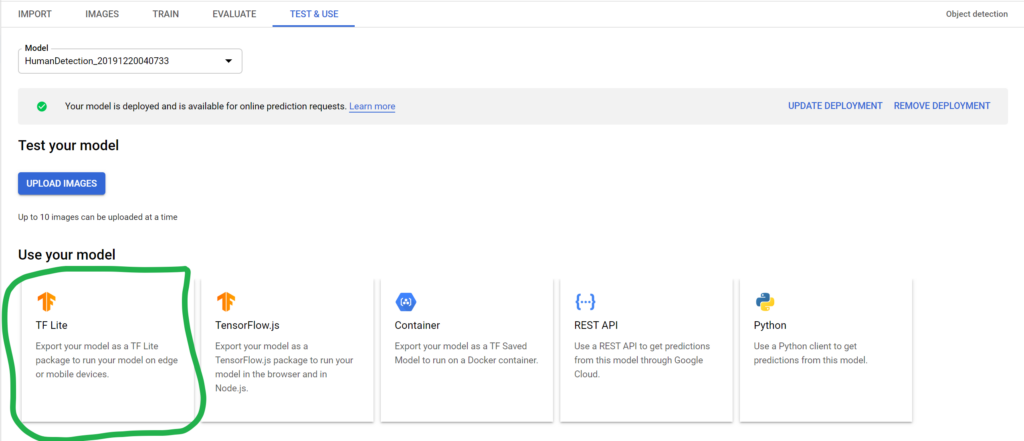

Once the model has been trained, you can proceed to the Test & Use tab and deploy the trained model.

Once the model has been deployed, you can upload images to the cloud console and test the model’s accuracy.

You can also download the tflite file on your local system and load it into your app to implement the same functionality there:
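For a quick local check in Python, something along these lines should work. Treat it as a sketch under a few assumptions rather than the definitive way to run the model: it assumes the export sits next to a text file with one label per line, that the model accepts raw RGB pixel values resized to its input shape, and that Pillow, NumPy, and TensorFlow are installed. The function names and the 0.5 threshold are my own choices for illustration.

```python
def dequantize(raw_scores, scale, zero_point):
    """Convert quantized integer outputs to floats.

    A scale of 0 means the tensor is not quantized, so values pass through.
    """
    if scale == 0:
        return [float(v) for v in raw_scores]
    return [scale * (float(v) - zero_point) for v in raw_scores]


def labels_above_threshold(scores, labels, threshold=0.5):
    """Keep every (label, score) pair whose score meets the threshold."""
    return [(label, score) for label, score in zip(labels, scores) if score >= threshold]


def classify(model_path, labels_path, image_path, threshold=0.5):
    """Run one image through a tflite model and return all matching labels."""
    # Imported lazily so the helpers above work without these packages installed.
    import numpy as np
    import tensorflow as tf
    from PIL import Image

    interpreter = tf.lite.Interpreter(model_path=model_path)
    interpreter.allocate_tensors()
    input_details = interpreter.get_input_details()[0]
    output_details = interpreter.get_output_details()[0]

    # Assumption: the input tensor is laid out as [batch, height, width, channels].
    _, height, width, _ = input_details["shape"]
    image = Image.open(image_path).convert("RGB").resize((width, height))
    batch = np.expand_dims(np.asarray(image, dtype=input_details["dtype"]), axis=0)

    interpreter.set_tensor(input_details["index"], batch)
    interpreter.invoke()
    raw = interpreter.get_tensor(output_details["index"])[0]

    scale, zero_point = output_details["quantization"]
    scores = dequantize(raw, scale, zero_point)

    with open(labels_path) as f:
        labels = [line.strip() for line in f if line.strip()]
    return labels_above_threshold(scores, labels, threshold)


# Example usage (hypothetical file names):
# print(classify("model.tflite", "labels.txt", "test.jpg"))
```

Because this is a multi-label model, the result is a list of every label above the threshold rather than a single best guess, so an image with both a dress and flowers should return both.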

And that’s it! You can add more images to your dataset, annotate them, and retrain the model if you want a more accurate model.

I’ll be writing another blog post soon on how to use the obtained tflite model to classify images in an Android app and a Python environment, so keep an eye out for that!

Thanks for reading! If you enjoyed this story, please click the 👏 button and share it to help others find it! Feel free to leave a comment 💬 below.

Have feedback? Let’s connect on Twitter.
