One week into my first-year physics course at the University of Michigan, a professor assigned a problem set that required simulating a many-body system. It was due Friday. That was the week I learned my first programming language, MATLAB.

This is how I’ve picked up bits and pieces of a dozen or so languages over the past decade. Besides an introductory CS class taught with C++ and a Java-based database class in graduate school, I never had any formal training in software engineering. For me, coding was a way to finish my homework, analyze data to answer a question, or turn an idea in my head into something real.

Sometimes this meant familiarizing myself with the details of algorithms or data structures, but I never found myself coding for the sake of coding. I don’t have an opinion on generics (don’t @ me). I think this describes the vast majority of data scientists and machine learning engineers I know. When it comes to choosing tools, we’re often optimizing for usability and efficiency within the context of the problems we want to solve, not software fundamentals.

Fast forward to 2018, and the machine learning and data science community seems to have settled on Python. The syntax is beginner-friendly, it’s a great scripting language, and when you need to optimize performance, you can interface with lower-level libraries written in C.
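As a minimal sketch of that last point, Python’s standard `ctypes` module can call a function from the C standard library directly. The `c_strlen` wrapper is a hypothetical name used only for illustration, and the library lookup assumes a POSIX system such as Linux or macOS.

```python
import ctypes
import ctypes.util

# Assumption: a POSIX system. Look up the C standard library by name and,
# if that fails, fall back to the symbols already loaded into this process.
libc_path = ctypes.util.find_library("c")
libc = ctypes.CDLL(libc_path) if libc_path else ctypes.CDLL(None)

# Declare the C signature: size_t strlen(const char *s);
libc.strlen.argtypes = [ctypes.c_char_p]
libc.strlen.restype = ctypes.c_size_t


def c_strlen(text: str) -> int:
    """Return the byte length of `text` as computed by C's strlen."""
    return libc.strlen(text.encode("utf-8"))


print(c_strlen("tensor"))
```

Libraries like NumPy take the same idea much further with compiled extension modules, but the principle is the same: Python handles the scripting, and C does the heavy lifting.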

For me, though, the most appealing part of Python is that it’s a “good enough” language for building entire systems end-to-end. Scientific computing packages like NumPy, pandas, and Matplotlib, along with Jupyter notebooks, have huge community support. And when it’s time to build an application around your work, frameworks like Flask and Django are performant enough to scale to hundreds of millions of users. I can build an entire system using a single programming language.

I’ve been satisfied with Python for almost 10 years. But I don’t think I’ll still be using it another decade from now. I think I’ll be using Swift.

At the 2018 TensorFlow Dev Summit, Google’s Chris Lattner announced that TensorFlow would soon be supporting Swift. Swift for TensorFlow is not a simple wrapper around TensorFlow meant to make it easier to use on iOS devices. It’s much more than that. This project is an attempt to change the default tools used by the entire machine learning and data science ecosystem.

As I was becoming familiar with the Python scientific stack, two other technology trends were slowly percolating in the background: the resurgence of AI through neural networks and deep learning and a shift toward mobile-first applications running on billions of smartphones and IoT devices. Both of these technologies require high-performance computing in ways that Python isn’t quite suited for.

Deep learning is computationally expensive, passing huge data sets through long chains of tensor operations. To perform these calculations quickly, software must be compiled targeting specialized processors with thousands of threads and cores. These problems are exacerbated in the context of mobile devices, where power and heat are real concerns. It’s a challenge to optimize applications for slower, more efficient processors with less memory. Thus far, Python hasn’t offered much help.
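To put a rough number on “computationally expensive,” here’s a back-of-the-envelope sketch that counts the multiply-add operations in one forward pass through a stack of dense layers. The `multiply_adds` helper, the batch size, and the layer sizes are all hypothetical values chosen for illustration; they don’t come from any particular model.

```python
def multiply_adds(batch_size, layer_sizes):
    """Count multiply-adds for one forward pass through dense layers.

    A dense layer mapping n_in features to n_out features costs
    batch_size * n_in * n_out multiply-adds (biases and activations ignored).
    """
    return sum(
        batch_size * n_in * n_out
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical small network: 784 inputs, two hidden layers of 512, 10 outputs.
ops = multiply_adds(batch_size=64, layer_sizes=[784, 512, 512, 10])
print(f"{ops:,} multiply-adds per batch")
```

Even this toy network needs tens of millions of operations per batch, before counting the backward pass or repeating it over thousands of batches.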

This is a bit of a problem for data scientists and machine learning researchers. We end up resorting to hacky workarounds to interface with GPUs, and many of us struggle with mobile app development. It’s not impossible to learn a new language, but switching costs are high. To see just how high, look no further than JavaScript projects like Node.js and cross-platform abstractions like React Native.

I’d planned on clinging to my NumPy arrays forever, but these days, it’s harder and harder to complete projects without leaving the boundaries of Python. It’s no longer a good-enough solution. The inability of Python to be an end-to-end language in a world dominated by machine learning and edge computing is the motivation behind Swift for TensorFlow.

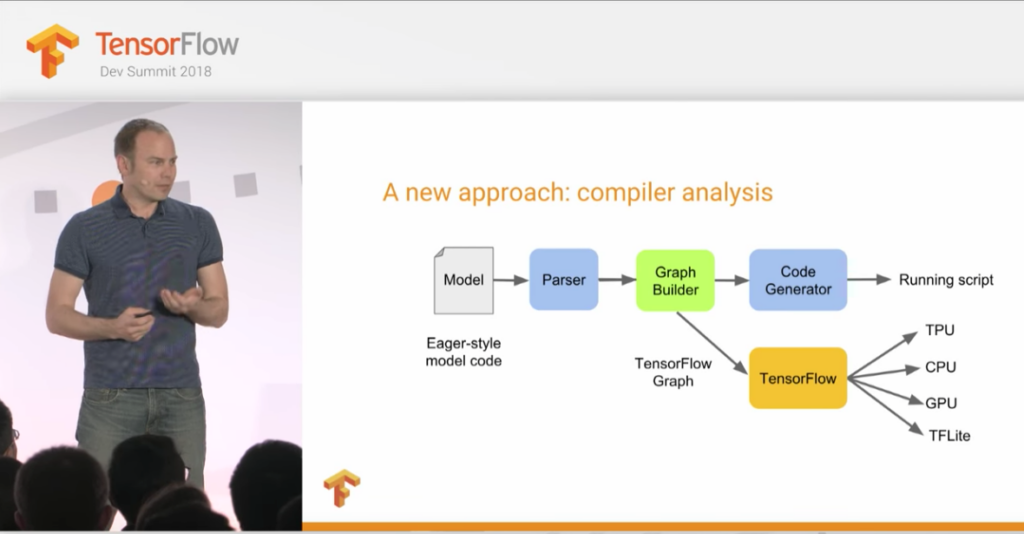

Chris Lattner makes the case that Python, with its dynamic typing and interpreter, can’t take us any further. In his words, engineers need a language that treats machine learning as a “first class citizen”. And while he lays out deeply technical reasons why a new approach to compiler analysis is necessary to change the way programs using TensorFlow are built and executed, the most compelling points of his argument focus on the experience of those doing the programming.
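To make the dynamic typing point concrete, here’s a small illustrative sketch (it is not taken from Lattner’s talk). The hypothetical `scale` function below does three unrelated things depending on what it’s handed, and the interpreter only finds out which one at run time.

```python
def scale(x, factor):
    # The meaning of `*` is resolved at run time from the type of `x`,
    # so nothing in this function says ahead of time what work it performs.
    return x * factor


print(scale(3, 2))           # integer multiplication
print(scale([1.0, 2.0], 2))  # list repetition, not element-wise math
print(scale("ab", 2))        # string repetition
```

A compiler that knows `x` is always a tensor of floats can plan memory and pick hardware instructions before the program runs. With code like this, those decisions have to wait until the types show up.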

Any wish list of language features that make it easier to *do* machine learning would include:

- Readable, efficient syntax

- Scripting capabilities

- Notebook-like interfaces

- Large, active community building third party libraries

- A clean, automated way of compiling code for specialized hardware from TPUs to mobile chips

- Native execution on mobile

- Performance closer to C

Lattner and his team are checking all of these boxes with Swift for TensorFlow. The syntax is almost as pretty as Python. It has an interpreter for scripting and notebooks. Oh, and it’s fast. On top of that, they are providing the ability to run arbitrary Python code to ease migration, and because Swift is now the default choice for iOS app development, deploying to mobile is easy.

Under the hood, Swift’s open-source compiler and static typing make it possible to target specialized AI chipsets at the build step. As one of the original creators of Swift, Lattner might be biased, but he’s convinced me that he understands the process of doing machine learning.

I’m in.

You can see Chris Lattner’s entire talk here:
