If you’re looking for an easy way to add machine learning to your Android apps, you’ve come to the right place. In this article, we’ll get started by implementing a task known as text recognition.

What is ML Kit?

ML Kit is a mobile SDK that brings Google’s machine learning expertise to Android & iOS apps with powerful, yet easy-to-use APIs and pre-trained models. Whether you’re new or experienced in machine learning, you can implement this functionality with just a few lines of code.

In this article, we’ll learn how to work with one of the models provided by the SDK: Text Recognition for Android. With this API, you can recognize text in images in any Latin-based language.

Create a Firebase Project and Add to Android

The first step is to create a Firebase project and add it to our Android app. At this point, I’ve already created a Firebase project, added an Android app to the project, and included the Google Services JSON file (google-services.json). If you’re not familiar with this process, you can follow the official Firebase guide for adding Firebase to an Android project.

Let’s Get Started

The Google Services plugin uses the google-services.json file to configure your application to use Firebase, and the ML Kit dependencies let you integrate the machine learning SDK into your app.

Add Dependencies

First things first, we need to add the Firebase ml-vision dependency to our Android project in the app-level build.gradle file. To use the text recognition feature, this is the dependency we need:

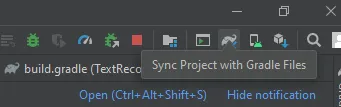
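In text form, the dependency line looks roughly like the following. The version number is an assumption on my part; use the latest release listed in the Firebase release notes.

```groovy
dependencies {
    // ML Kit for Firebase vision APIs, which include text recognition.
    // The version below is an assumption; check the Firebase release notes.
    implementation 'com.google.firebase:firebase-ml-vision:24.0.3'
}
```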

Sync the Project

After successfully adding the dependency, just sync the project, as shown in the screenshot below:

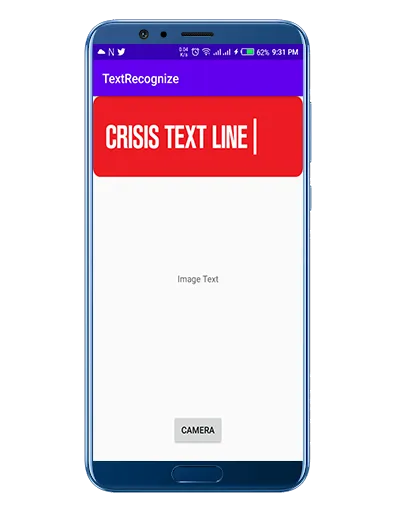

For now, I’ve just created a basic layout with a camera button and an image picker that takes its image from the camera.

How the SDK Works

Before we start coding the app to enable text recognition, let’s take a closer look at how the SDK works for text recognition.

Here are some key code concepts we’ll be looking at:

- First, we start with a bitmap of the selected image. Then we create a FirebaseVisionImage object by passing the bitmap to FirebaseVisionImage.fromBitmap().

- A FirebaseVisionImage represents an image object that can be used for both on-device and cloud API detectors. From there, we process the image using FirebaseVisionTextRecognizer, which is the on-device or cloud-based text recognizer.

- After that, we call processImage on the FirebaseVisionTextRecognizer, passing the FirebaseVisionImage as a parameter. We attach an OnSuccessListener to be notified when recognition is complete. If it succeeds, we can access the FirebaseVisionText object.

- We also add an OnFailureListener: if image processing fails, we’ll be able to show the user an error.

Now let’s jump into some code to see how the steps above look in practice:
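Here is a minimal sketch of those steps in Java. It only compiles inside an Android project that includes the firebase-ml-vision dependency. The class name TextRecognitionRunner and the Callback interface are hypothetical names used for illustration; the Firebase calls themselves (fromBitmap, getOnDeviceTextRecognizer, processImage) come from the ML Kit for Firebase API.

```java
import android.graphics.Bitmap;

import androidx.annotation.NonNull;

import com.google.android.gms.tasks.OnFailureListener;
import com.google.android.gms.tasks.OnSuccessListener;
import com.google.firebase.ml.vision.FirebaseVision;
import com.google.firebase.ml.vision.common.FirebaseVisionImage;
import com.google.firebase.ml.vision.text.FirebaseVisionText;
import com.google.firebase.ml.vision.text.FirebaseVisionTextRecognizer;

/** Hypothetical helper; the class and callback names are illustrative only. */
final class TextRecognitionRunner {

    /** Hypothetical callback used to hand the outcome back to the UI layer. */
    interface Callback {
        void onText(FirebaseVisionText text);
        void onError(Exception e);
    }

    static void run(Bitmap bitmap, final Callback callback) {
        // Wrap the bitmap in an image object that ML Kit detectors accept.
        FirebaseVisionImage image = FirebaseVisionImage.fromBitmap(bitmap);

        // On-device recognizer; FirebaseVision also exposes getCloudTextRecognizer().
        FirebaseVisionTextRecognizer recognizer =
                FirebaseVision.getInstance().getOnDeviceTextRecognizer();

        // processImage is asynchronous and reports back through the listeners.
        recognizer.processImage(image)
                .addOnSuccessListener(new OnSuccessListener<FirebaseVisionText>() {
                    @Override
                    public void onSuccess(FirebaseVisionText result) {
                        callback.onText(result);
                    }
                })
                .addOnFailureListener(new OnFailureListener() {
                    @Override
                    public void onFailure(@NonNull Exception e) {
                        callback.onError(e);
                    }
                });
    }
}
```

An Activity would call TextRecognitionRunner.run(bitmap, callback) after the user takes a picture, then update the TextView in onText or show an error message in onError.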

Process the Text Result

In the processTextRecogniation function, we first get a list of blocks from the FirebaseVisionText object. If no text is found, we display the message “No Text found” and return from the function.

Next, we iterate through the blocks we got from the FirebaseVisionText. For each block, we get its lines of text (FirebaseVisionText.Line) and iterate over them.

For each line, we get its list of elements (FirebaseVisionText.Element) and iterate over them. Each element roughly corresponds to an individual word and carries the frame of that word within the image.

Finally, we append the text from each element to the TextView.

Here’s a look at the code for this process:
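A minimal sketch of this processing step in Java might look like the following. It assumes the result is shown in a plain TextView and needs the same Android and Firebase dependencies as before. The class name TextResultRenderer and the method name are hypothetical; getTextBlocks, getLines, getElements, and getText are the accessors on FirebaseVisionText and its nested types.

```java
import android.widget.TextView;

import com.google.firebase.ml.vision.text.FirebaseVisionText;

import java.util.List;

/** Hypothetical helper; the class and method names are illustrative only. */
final class TextResultRenderer {

    static void processTextRecognition(FirebaseVisionText result, TextView textView) {
        List<FirebaseVisionText.TextBlock> blocks = result.getTextBlocks();
        if (blocks.isEmpty()) {
            // Nothing was recognized, so tell the user and stop.
            textView.setText("No Text found");
            return;
        }

        textView.setText(""); // clear any previous result
        for (FirebaseVisionText.TextBlock block : blocks) {
            for (FirebaseVisionText.Line line : block.getLines()) {
                for (FirebaseVisionText.Element element : line.getElements()) {
                    // element.getBoundingBox() holds the word's frame (it may be null).
                    textView.append(element.getText());
                    textView.append(" ");
                }
                textView.append("\n"); // keep the line structure of the source image
            }
        }
    }
}
```

Showing the “No Text found” message in the TextView is an assumption of this sketch; a Toast would work just as well.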

Result

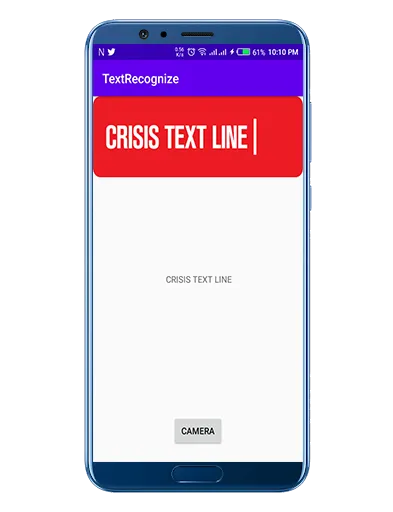

Let’s build and run the application to see our text recognizer in practice:

Wow! Look at that. We’ve successfully added a text recognition feature to our Android application.

Tips to improve real-time performance

Before we conclude, I wanted to also address a few ways you might improve the performance of the text recognizer:

- If you’re using the Camera API, capture images in the ImageFormat.NV21 format.

- If you’re using the Camera2 API, capture images in the ImageFormat.YUV_420_888 format.

- Consider capturing images at a lower resolution.
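One way to act on the last tip is to pick a modest capture size from the list the camera reports (for example, from Camera.Parameters.getSupportedPreviewSizes() or StreamConfigurationMap.getOutputSizes()). The sketch below is plain Java and is not part of ML Kit or the Android SDK. The CaptureSizePicker class, the {width, height} array representation, and the limit on the longer side are all assumptions made for illustration.

```java
import java.util.List;

/** Hypothetical helper for choosing a capture size; not an ML Kit or Android API. */
final class CaptureSizePicker {

    /**
     * Returns the largest {width, height} pair whose longer side does not exceed
     * maxLongSide. If no size fits, falls back to the smallest size available.
     */
    static int[] pick(List<int[]> supported, int maxLongSide) {
        if (supported.isEmpty()) {
            throw new IllegalArgumentException("supported sizes must not be empty");
        }
        int[] best = null;     // largest size within the limit so far
        int[] smallest = null; // fallback when nothing is within the limit
        for (int[] size : supported) {
            long area = (long) size[0] * size[1];
            if (smallest == null || area < (long) smallest[0] * smallest[1]) {
                smallest = size;
            }
            boolean fits = Math.max(size[0], size[1]) <= maxLongSide;
            if (fits && (best == null || area > (long) best[0] * best[1])) {
                best = size;
            }
        }
        return best != null ? best : smallest;
    }
}
```

For example, given the sizes 1920x1080, 1280x720, and 640x480 with a limit of 1280, this picks 1280x720. A suitable limit depends on how small the text in your images is, so treat any specific number as a starting point for experimentation.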

Conclusion

This article taught you how to recognize text from images on Android using Firebase’s ML Kit. To do this, we learned how to create a FirebaseVisionImage object and how to process the selected image.

We also created an application and added camera functionality. After capturing an image containing text, we ran it through the Vision text recognizer, fetched the text, and showed it in the TextView.

Text recognition has a wide variety of real-world use cases. One such use case is automating data entry for receipts, business cards, credit cards, and much more.

I hope this article is helpful. If you think something is missing, have questions, or would like to offer any thoughts or suggestions, go ahead and leave a comment below. I’d appreciate the feedback.

Sharing (knowledge) is caring 😊 Thanks for reading this article. Be sure to clap or recommend this article if you found it helpful. It means a lot to me.

If you need any help, join me on Twitter, LinkedIn, GitHub, and Facebook.