While looking to try my hand at using Apple’s Core ML framework for on-device machine learning, I stumbled upon a lot of tutorials. After having tried a few, I decided to come up with my very own 😛

Turns out, it was a good exercise! In this tutorial, I’ll walk you through the development of an app that uses a pre-trained Core ML model to detect a person’s age from an input image. So here we go.

Prerequisites:

1. macOS (Sierra 10.12 or above)

2. Xcode 9 or above

3. Device with iOS 11 or above. (Good news — The app can run on a simulator as well!)

Now, follow the steps below to start your Core ML quest:

Create an Xcode Project

To begin with, create a new Xcode project using the Single View App template (called simply App in newer Xcode versions), and name it anything under the sun.

Setup to Add an Input Image

We need a setup to pick photos from our library to feed the model as an input for age prediction. Instead of giving you a link to a ready-made setup, I’ll quickly walk you through it (Disclaimer: Pictures will speak louder than my words for this setup):

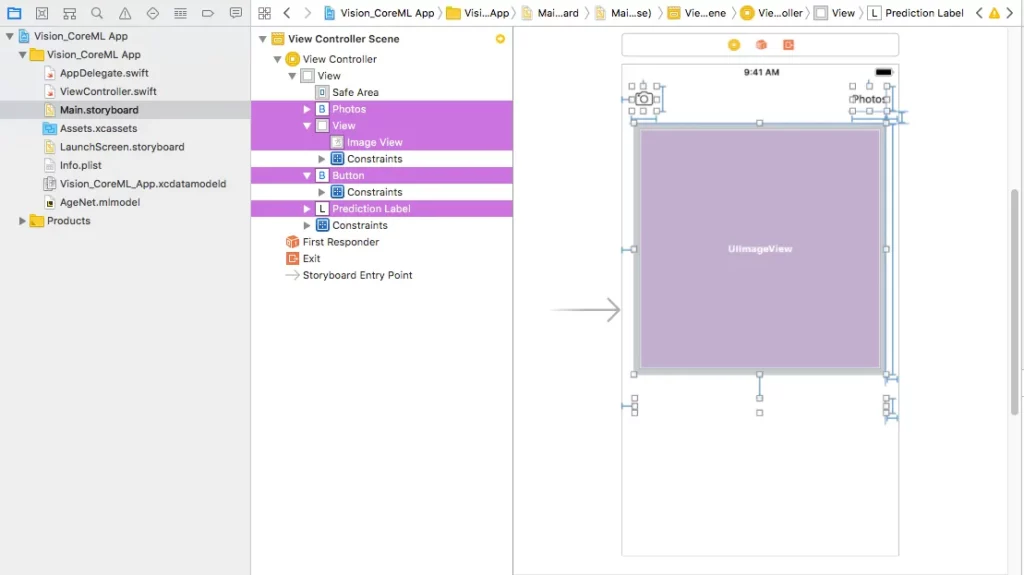

1. Let’s start with the UI. Jump to your Main.storyboard and drag the following components onto the current view:

- Two UIButtons, Camera and Photos: these let you input an image from the phone’s camera or the photo library, respectively
- UIImageView: this will display your input image
- UILabel: this will display the predicted age

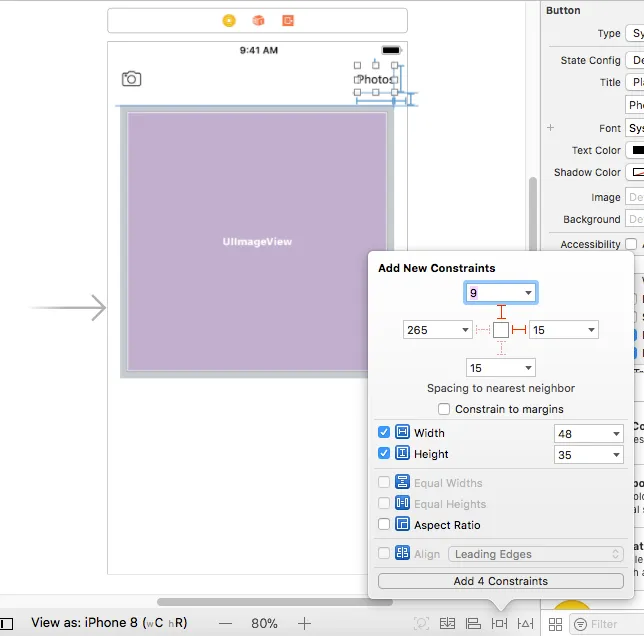

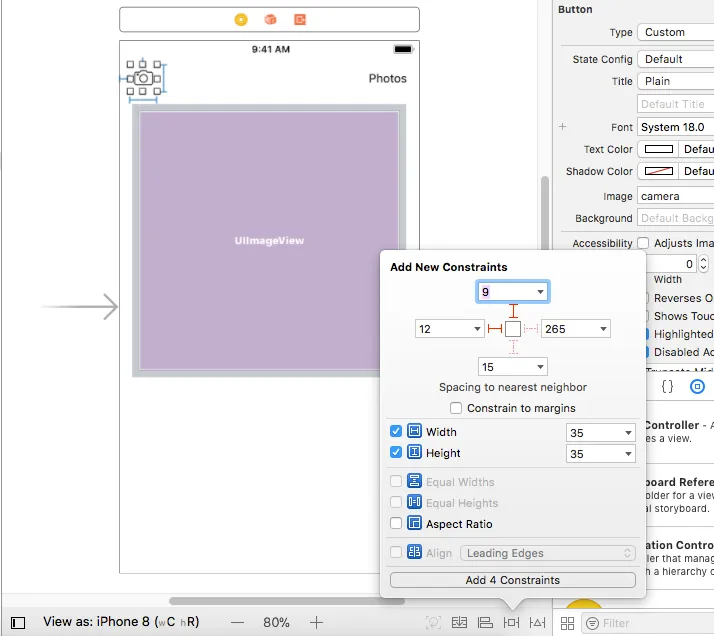

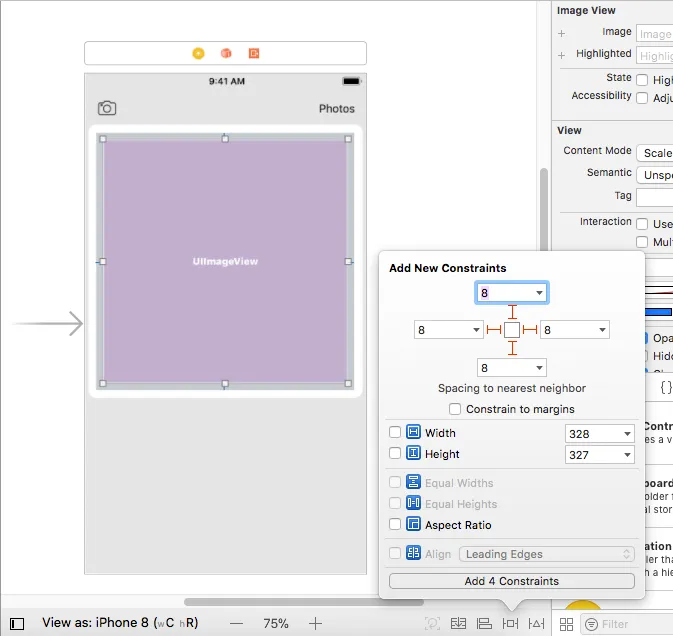

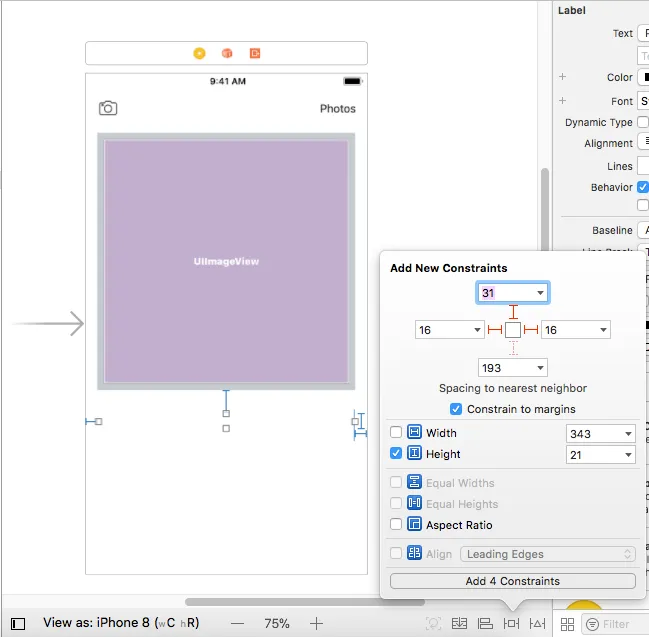

2. Now quickly add constraints to each item on your view. See the pictures below for the constraint settings.

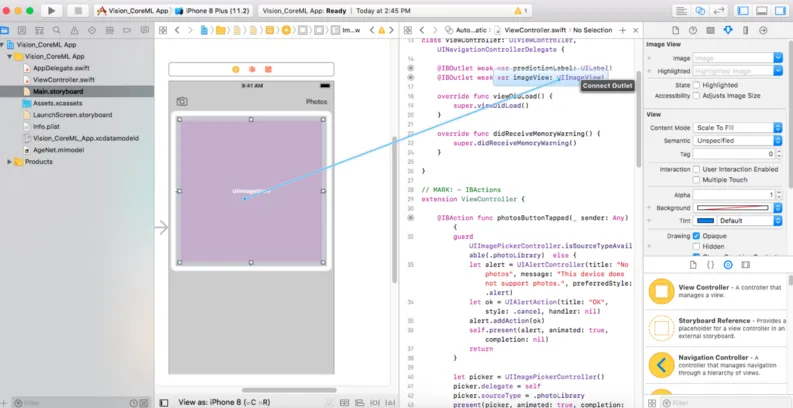

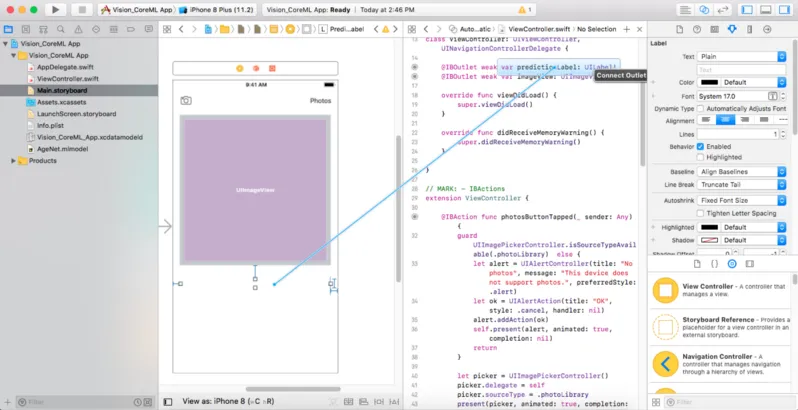

3. Open the assistant editor and create outlets as follows:

- A UIImageView outlet for the image view
- A UILabel outlet for the label

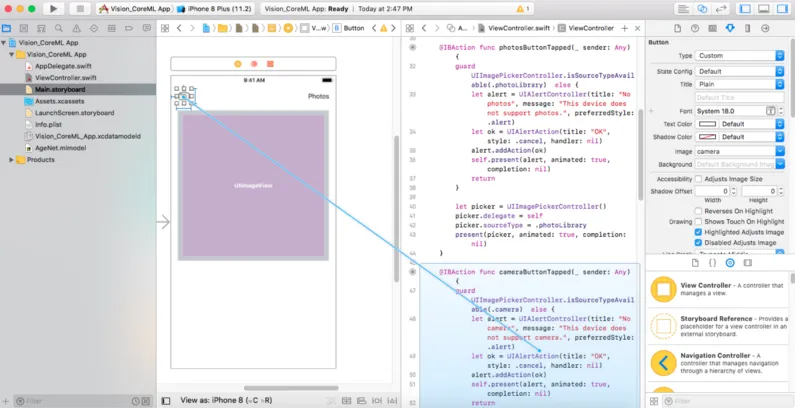

- An IBAction for each UIButton

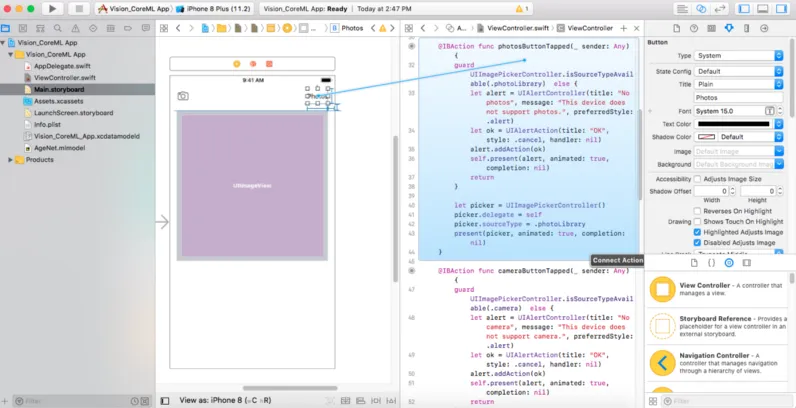

4. Add the following code to the IBActions for the Photos and Camera buttons. Each action presents the image picker for the photo library or the camera; if that source isn’t available on the device, it shows an alert instead:

For the Photos button:

    @IBAction func photosButtonTapped(_ sender: Any) {
        // Bail out with an alert if the photo library is unavailable
        guard UIImagePickerController.isSourceTypeAvailable(.photoLibrary) else {
            let alert = UIAlertController(title: "No photos", message: "This device does not support photos.", preferredStyle: .alert)
            let ok = UIAlertAction(title: "OK", style: .cancel, handler: nil)
            alert.addAction(ok)
            self.present(alert, animated: true, completion: nil)
            return
        }

        // Present the photo library picker
        let picker = UIImagePickerController()
        picker.delegate = self
        picker.sourceType = .photoLibrary
        present(picker, animated: true, completion: nil)
    }

For the Camera button:

    @IBAction func cameraButtonTapped(_ sender: Any) {
        // Bail out with an alert if the camera is unavailable (for example, on the simulator)
        guard UIImagePickerController.isSourceTypeAvailable(.camera) else {
            let alert = UIAlertController(title: "No camera", message: "This device does not support camera.", preferredStyle: .alert)
            let ok = UIAlertAction(title: "OK", style: .cancel, handler: nil)
            alert.addAction(ok)
            self.present(alert, animated: true, completion: nil)
            return
        }

        // Present the camera in photo capture mode
        let picker = UIImagePickerController()
        picker.delegate = self
        picker.sourceType = .camera
        picker.cameraCaptureMode = .photo
        present(picker, animated: true, completion: nil)
    }

5. Next, because we are using UIImagePickerController, our view controller class needs to conform to both UINavigationControllerDelegate and UIImagePickerControllerDelegate:

    class ViewController: UIViewController, UINavigationControllerDelegate, UIImagePickerControllerDelegate {
        ...
        ...
        ...
    }

6. Now we need to use the didFinishPickingMediaWithInfo delegate method to show the image picked from the camera or photo library in the image view on our UI. Use the following code to do this:

func imagePickerController(_ picker: UIImagePickerController, didFinishPickingMediaWithInfo info: [String : Any]) {

// Dismiss the picker controller

dismiss(animated: true)

// guard unwrap the image picked

guard let image = info[UIImagePickerControllerOriginalImage] as? UIImage else {

fatalError("couldn't load image")

}

// Set the picked image to the UIImageView - imageView

imageView.image = image

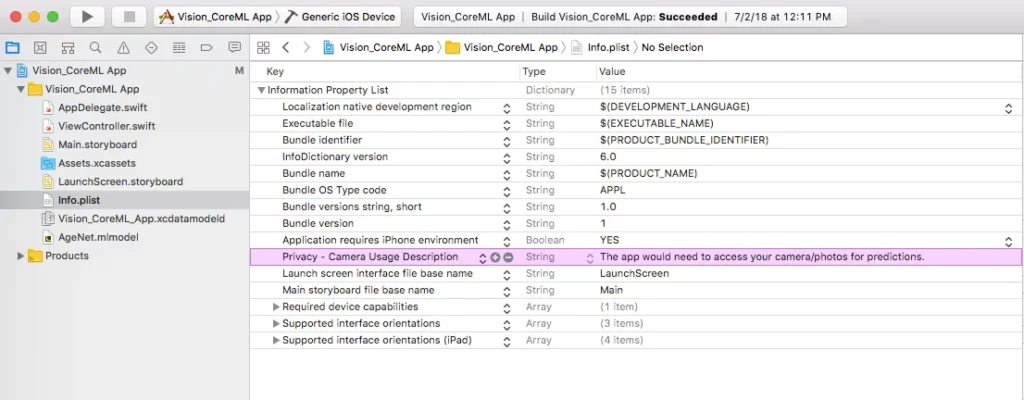

}7. Now move to Info.plist and add a key — Privacy — Camera Usage Description and add Value — The app needs to access your camera/photos for predictions.
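If the camera usage description is missing, the problem only surfaces at runtime, when the app is terminated as it tries to open the camera. As a minimal sketch, a debug-time check could look the key up in the app's Info.plist dictionary. The helper name `missingUsageDescriptions` is hypothetical and not part of any Apple API; only `Bundle.main.infoDictionary` and the `NSCameraUsageDescription` key are real.

```swift
import Foundation

/// Hypothetical helper: returns the required Info.plist keys that are
/// missing, not strings, or blank in the given dictionary.
func missingUsageDescriptions(in info: [String: Any],
                              required: [String] = ["NSCameraUsageDescription"]) -> [String] {
    return required.filter { key in
        // A missing key or a non-string value counts as missing
        guard let text = info[key] as? String else { return true }
        // So does a value that is only whitespace
        return text.trimmingCharacters(in: .whitespaces).isEmpty
    }
}

// Example use, e.g. from viewDidLoad() in a debug build
let missing = missingUsageDescriptions(in: Bundle.main.infoDictionary ?? [:])
if !missing.isEmpty {
    print("Add these keys to Info.plist: \(missing)")
}
```

Taking the dictionary as a parameter, rather than reading `Bundle.main` inside the helper, keeps the check easy to exercise with sample data.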

Congratulations! You’ve completed the initial setup. Run your app, and you should have something that lets you take pictures from the phone’s camera or the device’s photo library and display them in the image view on the screen.

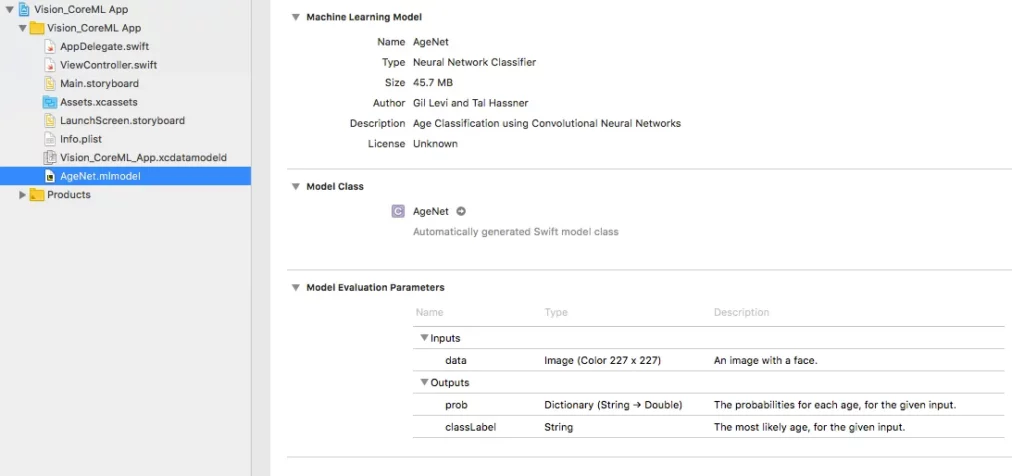
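The Photos and Camera actions from step 4 build the same alert and differ only in its text, so that part could be shared. The following is a sketch rather than part of the original project: `showUnavailableAlert(title:message:)` is a hypothetical helper name, while the UIKit calls are the same ones used in step 4.

```swift
import UIKit

extension UIViewController {
    /// Hypothetical helper: presents a one-button alert, as both button
    /// actions do when their image source is unavailable.
    func showUnavailableAlert(title: String, message: String) {
        let alert = UIAlertController(title: title, message: message, preferredStyle: .alert)
        alert.addAction(UIAlertAction(title: "OK", style: .cancel, handler: nil))
        present(alert, animated: true, completion: nil)
    }
}

// Inside cameraButtonTapped(_:), the guard would then shrink to:
//
//     guard UIImagePickerController.isSourceTypeAvailable(.camera) else {
//         showUnavailableAlert(title: "No camera", message: "This device does not support camera.")
//         return
//     }
```

Putting the helper in an extension on UIViewController means any screen that later needs a picker can reuse it.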

Add a Core ML model to the project

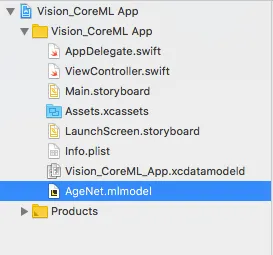

1. Once the setup is done, you need to add a Core ML model to your project. For this app, I used AgeNet. You can download it here.

2. Once you’ve downloaded the model, you need to add it to your project. All hail drag-and-drop! Drag and drop the downloaded model into the Xcode project structure.

3. Now analyze (aka ‘click on’) this model to see the input and output parameters and other model information.

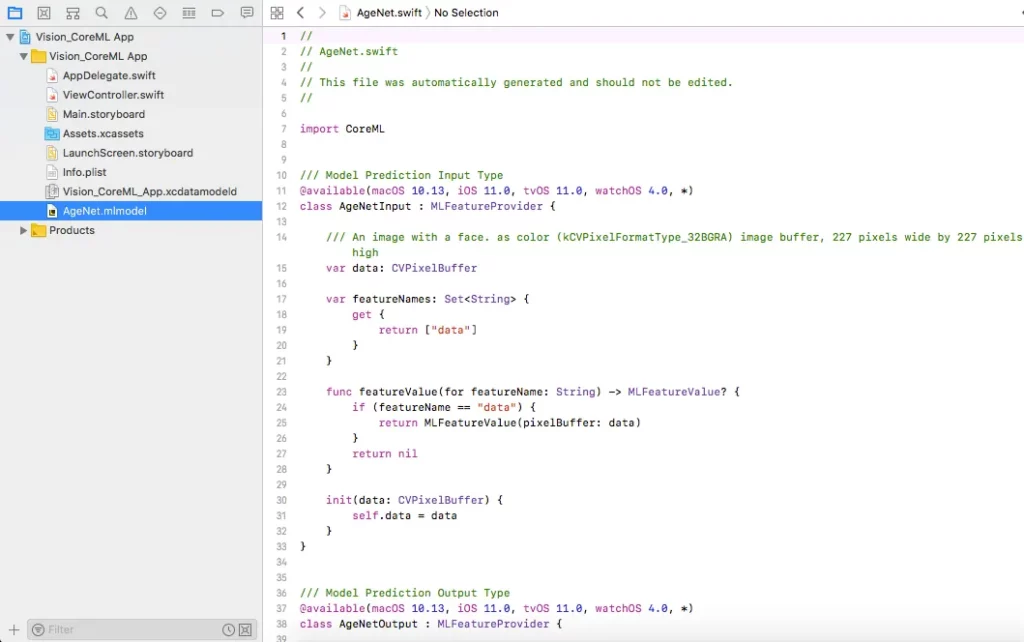

Additionally, you can click the arrow in front of the AgeNet label in the Model Class section to open the model class, which is automagically generated by Xcode for your Core ML model.
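The same input and output information can also be read in code through the model's description. This is a small sketch that assumes AgeNet.mlmodel is already in the project, so that Xcode has generated the `AgeNet` class; the function name `printModelInterface` is made up for illustration.

```swift
import CoreML

/// Hypothetical helper: prints the model's input and output features.
/// Assumes Xcode has generated the AgeNet class from AgeNet.mlmodel.
func printModelInterface() {
    let description = AgeNet().model.modelDescription

    // Each entry maps a feature name to an MLFeatureDescription
    for (name, feature) in description.inputDescriptionsByName {
        print("input \(name): \(feature)")
    }
    for (name, feature) in description.outputDescriptionsByName {
        print("output \(name): \(feature)")
    }
}
```

Calling it once, for instance from viewDidLoad(), is enough to confirm that the model expects an image as input before wiring it up to Vision.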

Make Predictions!

1. Now we need to give the model the input it expects so that it returns a prediction as output. For this, you’ll have to import Core ML and the Vision framework into your ViewController.swift file.

Add import statements at the top as follows:

    import CoreML
    import Vision

2. Add a detectAge() function to the file. This function initializes your AgeNet model and passes it to a Vision request, which takes an image as input. The request’s completion handler retrieves the prediction’s result and shows it in the label.

    func detectAge(image: CIImage) {
        predictionLabel.text = "Detecting age…"

        // Load the ML model through its generated class
        guard let model = try? VNCoreMLModel(for: AgeNet().model) else {
            fatalError("can't load AgeNet model")
        }

        // Create a Vision request for the loaded Core ML model
        let request = VNCoreMLRequest(model: model) { [weak self] request, error in
            guard let results = request.results as? [VNClassificationObservation],
                let topResult = results.first else {
                fatalError("unexpected result type from VNCoreMLRequest")
            }

            // Update the UI on the main queue (inside the handler, where topResult is in scope)
            DispatchQueue.main.async { [weak self] in
                self?.predictionLabel.text = "I think your age is \(topResult.identifier) years!"
            }
        }

        // Run the Core ML AgeNet classifier on a global dispatch queue
        let handler = VNImageRequestHandler(ciImage: image)
        DispatchQueue.global(qos: .userInteractive).async {
            do {
                try handler.perform([request])
            } catch {
                print(error)
            }
        }
    }

3. So far so good! Now the last thing you need to do is call the detectAge() function when the image picker finishes picking an image from the camera or photo library. So add the method call to the didFinishPickingMediaWithInfo delegate method added earlier. The delegate method should look like this:

    func imagePickerController(_ picker: UIImagePickerController, didFinishPickingMediaWithInfo info: [String : Any]) {
        // Dismiss the picker controller
        dismiss(animated: true)

        // Unwrap the picked image
        guard let image = info[UIImagePickerControllerOriginalImage] as? UIImage else {
            fatalError("couldn't load image")
        }

        // Show the picked image in the UIImageView outlet, imageView
        imageView.image = image

        // Convert UIImage to CIImage to pass to the image request handler
        guard let ciImage = CIImage(image: image) else {
            fatalError("couldn't convert UIImage to CIImage")
        }

        detectAge(image: ciImage)
    }

And that’s it! You’re good to go! Run the project and get ready to detect the ages of people around you. You can use the camera or select photos from your photos library.
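The completion handler in detectAge() only shows the first classification, but every VNClassificationObservation also carries a confidence between 0 and 1. If you want to see how sure the model is, one option is to format the few most confident results. The helper below is a sketch with a hypothetical name, `summarize`; it takes plain tuples standing in for each observation's identifier and confidence, so it does not depend on Vision itself.

```swift
import Foundation

/// Hypothetical helper: formats the `count` most confident labels as "label (NN%)".
/// The tuples mirror VNClassificationObservation's identifier and confidence.
func summarize(_ results: [(identifier: String, confidence: Float)], top count: Int = 3) -> String {
    return results
        .sorted { $0.confidence > $1.confidence }   // most confident first
        .prefix(count)                              // keep at most `count` entries
        .map { "\($0.identifier) (\(Int(($0.confidence * 100).rounded()))%)" }
        .joined(separator: ", ")
}

// Inside the VNCoreMLRequest completion handler, this could be used as:
//
//     let pairs = results.map { (identifier: $0.identifier, confidence: $0.confidence) }
//     let text = summarize(pairs)
//     DispatchQueue.main.async { self?.predictionLabel.text = text }
```

Sorting inside the helper avoids relying on the order in which the observations arrive.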

You can find the entire project here.

Here’s a sample demo for the app:
