Mobile machine learning, relatively speaking, is still in its infancy. While I fully envision a future where hundreds of thousands of mobile apps will seamlessly leverage ML to provide more personalized, responsive, and immersive user experiences, I also recognize that we all have to start somewhere.

Whether you’re a mobile dev who wants to add ML to your toolkit, a hobbyist looking for a new technology to tinker with, or a product manager trying to get a sense of how mobile ML might work within your roadmap, there are some easy ways to jump right in and experiment.

In the spirit of this journey, here’s a first step: a collection of some of the most common and accessible demo and proof-of-concept projects that use mobile machine learning. I’ve organized these starter projects by task and, where possible, provided code repos, tutorials, and more.

Image Recognition

Image recognition is a computer vision technique that allows machines to interpret and categorize what they “see” in images or videos. Often referred to as “image classification” or “image labeling”, this core task is a foundational component in solving many computer vision-based machine learning problems.

Image recognition models are trained to take an image as input and output one or more labels describing it. The set of possible output labels is referred to as the target classes. Along with a predicted class, image recognition models may also output a confidence score indicating how certain the model is that the image belongs to that class.
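To make the label-plus-confidence output concrete, here is a minimal sketch, assuming a hypothetical model that returns one raw score (logit) per target class. A softmax turns those scores into probabilities, and the top-k probabilities become the predicted labels with their confidences. Many models apply the softmax internally, in which case you would skip that step; the function names and example labels are illustrative, not taken from any particular framework.

```python
import math

def softmax(logits):
    """Convert raw per-class scores into probabilities that sum to 1."""
    # Subtract the max logit before exponentiating for numerical stability.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_labels(logits, labels, k=3):
    """Return the k most likely (label, confidence) pairs, highest first."""
    if len(logits) != len(labels):
        raise ValueError("one logit per target class is required")
    probs = softmax(logits)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]

# Usage with hypothetical scores from a three-class model.
labels = ["hotdog", "pizza", "salad"]
print(top_k_labels([2.0, 1.0, 0.1], labels, k=2))
```

Returning several ranked labels rather than only the argmax lets the app decide how to handle low-confidence cases, such as showing "not sure" when the top probability is small.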

(1) Not Hotdog

Made famous (or perhaps infamous) by the HBO show Silicon Valley, the “Not Hotdog” demo app is fairly simple—point the phone’s camera at an object, and an image recognition model will tell you whether or not the object is a hotdog. A simple (and fun) way to play with image recognition.

Code:

Tutorials:

Demo:

(2) Skin Disease Recognition/Classification

Another burgeoning use case for on-device image recognition is recognizing and classifying skin conditions (cancer, acne, other lesions, etc.). Depending on the specific dataset and use case, these apps can help indicate whether an individual may have one or more particular skin conditions.

Code:

Tutorials:

(3) Handwritten Digit Classification

Leveraging the famous MNIST dataset is another popular way to experiment with on-device machine learning. This dataset contains images of handwritten digits, and demo apps built on it can classify digits 0–9 in real time.
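Before a drawing from the phone’s screen reaches an MNIST-trained model, it usually has to look like an MNIST sample: 28 x 28 grayscale pixels with a light digit on a dark background. Below is a minimal sketch, assuming the app captures a square canvas of 0-255 grayscale values with dark ink on white, and that the model expects inputs scaled to [0, 1] (check your model’s actual input spec). It average-pools the canvas down to 28 x 28 and inverts it; the original MNIST preprocessing also centers each digit by its center of mass, which this sketch omits.

```python
def to_mnist_input(canvas, size=28):
    """Downsample a square 0-255 grayscale canvas (dark ink on white) to size x size,
    inverting it so strokes are bright on dark, and scale values to [0, 1]."""
    n = len(canvas)
    if n == 0 or n % size != 0 or any(len(row) != n for row in canvas):
        raise ValueError("canvas must be square with a side divisible by size")
    block = n // size
    out = []
    for by in range(size):
        row = []
        for bx in range(size):
            # Average all canvas pixels that fall into this output cell.
            total = sum(
                canvas[y][x]
                for y in range(by * block, (by + 1) * block)
                for x in range(bx * block, (bx + 1) * block)
            )
            mean = total / (block * block)
            row.append((255 - mean) / 255.0)  # invert: ink (0) -> 1.0, paper (255) -> 0.0
        out.append(row)
    return out
```

The resulting 28 x 28 grid would then be flattened or reshaped into whatever tensor layout the model declares.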

Code:

Tutorials:

Demo:

(4) Gesture/Emotion Recognition

We’ve also recently seen a surge of demo projects that look to classify gestures, facial expressions, and more—some to identify a certain emotion, some to communicate a message, and others that combine or otherwise employ this kind of recognition.
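Real-time gesture and emotion demos typically classify every camera frame independently, so the displayed label can flicker when a few frames are misclassified. One common remedy, sketched here under the assumption that your classifier emits one label per frame, is a majority vote over a short sliding window. The class name and window size are hypothetical choices, not part of any specific project above.

```python
from collections import Counter, deque

class PredictionSmoother:
    """Report a label only when it wins a strict majority of the last `window` frames."""

    def __init__(self, window=5):
        # deque with maxlen automatically drops the oldest frame's label.
        self.history = deque(maxlen=window)

    def update(self, label):
        self.history.append(label)
        winner, count = Counter(self.history).most_common(1)[0]
        # Return None (no confident label) when no class has a strict majority.
        return winner if count > len(self.history) // 2 else None
```

A larger window gives steadier output at the cost of reacting more slowly when the user’s gesture or expression actually changes.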

Code:

Tutorials:

Demo:

Object Detection

Object detection is a computer vision technique that allows us to identify and locate objects in an image or video. With this kind of identification and localization, object detection can be used to count objects in a scene and determine and track their precise locations, all while accurately labeling them.
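Counting objects relies on a post-processing step most detectors need: the raw output often contains several overlapping boxes for the same object, and non-maximum suppression (NMS) keeps only the highest-scoring one. The sketch below assumes detections arrive as hypothetical (label, score, box) tuples with boxes in (x_min, y_min, x_max, y_max) pixel coordinates; the thresholds are illustrative defaults. Some detection models and runtimes already apply NMS for you, so check your model’s output before adding it.

```python
from collections import Counter

def iou(a, b):
    """Intersection-over-union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def non_max_suppression(detections, iou_threshold=0.5, score_threshold=0.3):
    """Greedy per-class NMS over (label, score, box) tuples, highest score first."""
    kept = []
    for label, score, box in sorted(detections, key=lambda d: d[1], reverse=True):
        if score < score_threshold:
            continue  # too uncertain to report at all
        # Keep this box unless a higher-scoring box of the same class overlaps it heavily.
        if all(k_label != label or iou(k_box, box) < iou_threshold
               for k_label, _, k_box in kept):
            kept.append((label, score, box))
    return kept

def count_objects(detections, **thresholds):
    """Count surviving detections per class label."""
    return Counter(label for label, _, _ in non_max_suppression(detections, **thresholds))
```

Suppressing per class (rather than across all classes) lets two different objects that genuinely overlap, such as a cat sitting on a chair, both survive.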

(1) Object Detection for Individuals with Visual Impairments

In a testament to the desire of the engineering community to build applications that support higher degrees of accessibility, we’ve seen numerous proof-of-concept demos that use object detection to recognize, locate, and track objects in the real world. These apps are generally designed to leverage object detection to trigger non-visual warnings to users about impediments, moving objects, etc.
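How such an app decides when to warn is a design choice, not a property of object detection itself. As one hypothetical heuristic, assuming a detector that returns pixel boxes: a box that fills a large share of the frame is likely close, and one that overlaps the middle of the frame is likely in the user’s path. The thresholds below are made-up starting points that would need tuning (and ideally a depth estimate) in practice.

```python
def should_warn(box, frame_w, frame_h, min_area_fraction=0.25, path_band=0.4):
    """Hypothetical proximity heuristic for a detected obstacle.

    box: (x_min, y_min, x_max, y_max) in pixels.
    Returns True when the box is large relative to the frame (likely close)
    AND horizontally overlaps a central band of the frame (likely in the user's path).
    """
    x_min, y_min, x_max, y_max = box
    area = max(0, x_max - x_min) * max(0, y_max - y_min)
    is_large = area / (frame_w * frame_h) >= min_area_fraction
    # Central vertical band covering `path_band` of the frame width.
    band_left = frame_w * (0.5 - path_band / 2)
    band_right = frame_w * (0.5 + path_band / 2)
    in_path = x_max > band_left and x_min < band_right
    return is_large and in_path
```

An app would then map a True result to a non-visual cue, such as a vibration or a spoken alert.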

Code:

(2) Pet Monitoring

Because object detection helps us both identify AND track objects, it’s also an ideal solution for apps that monitor your pets while you’re not home or nearby. An app that leverages object detection in this way could send alerts to users (or trigger other events) if their cat, dog, iguana, or other beloved pet enters a no-go zone (e.g., hops on a clean counter or gets into the garbage).
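The no-go-zone logic can be as simple as checking how much of a pet’s bounding box falls inside a rectangle the user has marked out. This sketch assumes the same hypothetical (label, score, box) detection format, a detector whose classes include "cat" and "dog", and a zone drawn in the same pixel coordinates as the camera frame; the thresholds are illustrative.

```python
def overlap_fraction(box, zone):
    """Fraction of `box` area inside `zone`; both are (x_min, y_min, x_max, y_max)."""
    ix = max(0, min(box[2], zone[2]) - max(box[0], zone[0]))
    iy = max(0, min(box[3], zone[3]) - max(box[1], zone[1]))
    area = (box[2] - box[0]) * (box[3] - box[1])
    return ix * iy / area if area > 0 else 0.0

def pets_in_zone(detections, zone, pet_labels=("cat", "dog"),
                 min_overlap=0.5, min_score=0.5):
    """Return labels of confident pet detections that are mostly inside the zone."""
    return [
        label
        for label, score, box in detections
        if label in pet_labels
        and score >= min_score
        and overlap_fraction(box, zone) >= min_overlap
    ]
```

A real app would also debounce alerts, for example by requiring the pet to stay in the zone for several frames, so a single jump onto the counter doesn’t send dozens of notifications.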

Code:

Tutorials:

(3) Other

There are many other on-device object detection use cases we could cover, but below are a couple of projects I found particularly fun and engaging:

Solving Word Search Puzzles:

Hand Detection (Object Detection + Augmented Reality):

Image Segmentation

Image segmentation is a computer vision technique used to understand what is in a given image at the pixel level. It differs from image recognition, which assigns one or more labels to an entire image, and from object detection, which localizes objects within an image by drawing a bounding box around them. By assigning a label to each pixel, image segmentation provides more fine-grained information about the contents of an image.

(1) Portrait Mode

Though portrait mode is built into flagship devices, many older smartphones can only achieve this effect with a segmentation model: create a pixel-level mask of the foreground (e.g., a person, an object, or elements of a scene) and blur the background to create a depth-of-field effect.
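Once the segmentation model has produced a mask, the portrait effect itself is a per-pixel blend: foreground pixels come from the original image and background pixels from a blurred copy. The sketch below assumes a grayscale image and a same-sized mask with values from 0 (background) to 1 (foreground), stored as plain nested lists. It uses a simple box blur for clarity; production apps would typically run a GPU-accelerated (often Gaussian) blur on full-color frames.

```python
def box_blur(img, radius=1):
    """Mean filter over a (2*radius+1)^2 window, shrinking the window at the edges."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, n = 0.0, 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += img[yy][xx]
                    n += 1
            out[y][x] = total / n
    return out

def portrait_effect(img, mask, radius=2):
    """Blend per pixel: mask * sharp + (1 - mask) * blurred.

    mask values are 1.0 for foreground, 0.0 for background; soft values blend.
    """
    blurred = box_blur(img, radius)
    return [
        [m * p + (1 - m) * b for p, b, m in zip(img_row, blur_row, mask_row)]
        for img_row, blur_row, mask_row in zip(img, blurred, mask)
    ]
```

Because the mask multiplies rather than selects, a soft-edged mask produces a gradual transition between the sharp subject and the blurred background instead of a hard cutout line.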

Code:

Tutorials:

Demo:

(2) Green Screen Effect / Background Replacement

Setting up a full green screen rig can cost thousands of dollars and take up a lot of space. But with on-device image segmentation, we can isolate backgrounds in images and video feeds and overlay graphics, AR objects, and more, creating a virtual green screen effect.
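Background replacement uses the segmentation mask differently: instead of blurring the original background, each pixel is alpha-blended between the camera frame and a replacement image. A minimal sketch, assuming RGB frames stored as nested lists of (r, g, b) tuples, a replacement background already resized to the frame’s dimensions, and a soft mask in [0, 1].

```python
def replace_background(frame, mask, background):
    """Composite per pixel and channel: out = m * frame + (1 - m) * background.

    frame, background: rows of (r, g, b) tuples with identical dimensions.
    mask: rows of floats in [0, 1], where 1.0 means "keep the foreground".
    """
    if not (len(frame) == len(mask) == len(background)):
        raise ValueError("frame, mask, and background must have the same height")
    out = []
    for frame_row, mask_row, bg_row in zip(frame, mask, background):
        out.append([
            # Blend each color channel and round back to an integer 0-255 value.
            tuple(round(m * f + (1 - m) * b) for f, b in zip(fg_px, bg_px))
            for fg_px, m, bg_px in zip(frame_row, mask_row, bg_row)
        ])
    return out
```

Using a soft mask, rather than a strict 0/1 mask, helps hide jagged edges around hair and shoulders where the model is least certain.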

Code:

Tutorials:

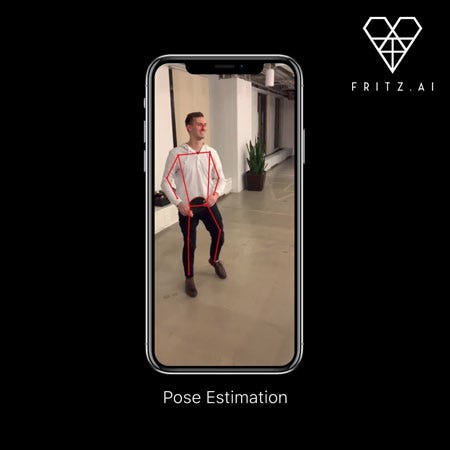

Pose Estimation

Pose estimation is a computer vision task that infers the pose of a person or object in an image or video. We can also think of pose estimation as the problem of determining the position and orientation of a camera relative to a given person or object.

This is typically done by identifying, locating, and tracking a number of keypoints on a given object or person. For objects, this could be corners or other significant features. And for humans, these keypoints represent major joints like an elbow or knee.
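Once keypoints are located, many downstream features reduce to simple geometry on them. For example, the angle at a joint follows from the dot product of the two limb vectors u and v that meet there: θ = arccos((u · v) / (|u| |v|)). The sketch below assumes 2D (x, y) keypoints, such as shoulder, elbow, and wrist, in the same image coordinates; which keypoints your model outputs, and in what order, depends on the model.

```python
import math

def joint_angle(a, b, c):
    """Angle in degrees at keypoint b between limbs b->a and b->c.

    Keypoints are (x, y) pairs, e.g. a = shoulder, b = elbow, c = wrist.
    """
    u = (a[0] - b[0], a[1] - b[1])
    v = (c[0] - b[0], c[1] - b[1])
    norm_u, norm_v = math.hypot(*u), math.hypot(*v)
    if norm_u == 0 or norm_v == 0:
        raise ValueError("keypoints must be distinct from the joint")
    cos_theta = (u[0] * v[0] + u[1] * v[1]) / (norm_u * norm_v)
    # Clamp to [-1, 1] so floating-point error never pushes acos out of its domain.
    cos_theta = max(-1.0, min(1.0, cos_theta))
    return math.degrees(math.acos(cos_theta))
```

A fully extended arm gives roughly 180 degrees and a tightly bent one a small angle, which is the kind of signal exercise-tracking apps build on.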

Human Movement Tracking

Nailing down common use cases or demo projects for pose estimation can be a bit more difficult, as its power lies in how it can augment very specific tasks, such as monitoring exercise routines and enabling AR overlays. The fundamental use case of tracking specific human movements can take many different forms, so for these demos, we’ll simply focus on high-quality implementations of pose estimation that track human movement in a broader sense.
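As one example of the exercise-monitoring use case, a rep counter can be built on top of a per-frame joint angle, such as the elbow angle during a biceps curl. This sketch assumes you already have one angle (in degrees) per frame; the two thresholds are hypothetical values for a curl and would differ for other exercises.

```python
def count_reps(angles, down=70.0, up=160.0):
    """Count reps from per-frame joint angles (degrees).

    A rep is a flexed phase (angle below `down`) followed by an extended phase
    (angle above `up`). Using two thresholds (hysteresis) keeps jitter around a
    single cutoff from being counted as extra reps.
    """
    reps, flexed = 0, False
    for angle in angles:
        if not flexed and angle < down:
            flexed = True
        elif flexed and angle > up:
            flexed = False
            reps += 1  # completed flex -> extend cycle
    return reps
```

The gap between `down` and `up` is the key design choice: too narrow and noisy keypoints cause double counts, too wide and partial reps are never counted.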

Code:

Tutorials:

Natural Language Processing Use Cases

The above use cases (all computer vision) are just the tip of the iceberg. There are also a number of natural language processing (NLP) use cases that developers can easily and quickly experiment with. These ML tasks help us classify and better understand text.

(1) Sentiment Analysis

Sentiment analysis allows us to understand and classify the positivity, negativity, or neutrality of a given text.
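Many compact on-device text classifiers are binary, outputting a probability that the text is positive. If your app also needs a neutral class, one simple option, sketched here as an assumption rather than a standard, is to treat scores near 0.5 as neutral. The band width is a hypothetical parameter you would tune on labeled examples.

```python
def sentiment_label(p_positive, neutral_band=0.2):
    """Map a binary classifier's P(positive) to "positive", "negative", or "neutral".

    Scores within neutral_band / 2 of 0.5 are treated as neutral.
    """
    if not 0.0 <= p_positive <= 1.0:
        raise ValueError("p_positive must be a probability in [0, 1]")
    if p_positive >= 0.5 + neutral_band / 2:
        return "positive"
    if p_positive <= 0.5 - neutral_band / 2:
        return "negative"
    return "neutral"
```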

Code:

Tutorials:

(2) Translation

Language translation can now reliably take place on-device, and is made even easier with Google’s pre-built API (both cloud and on-device flavors) via ML Kit, their mobile machine learning framework.

Code:

Tutorials:

Tools to Start Building

These code examples and tutorials should give you a pretty good starting point, but here are a few specific tools that can help you successfully build these (and other) demo projects.

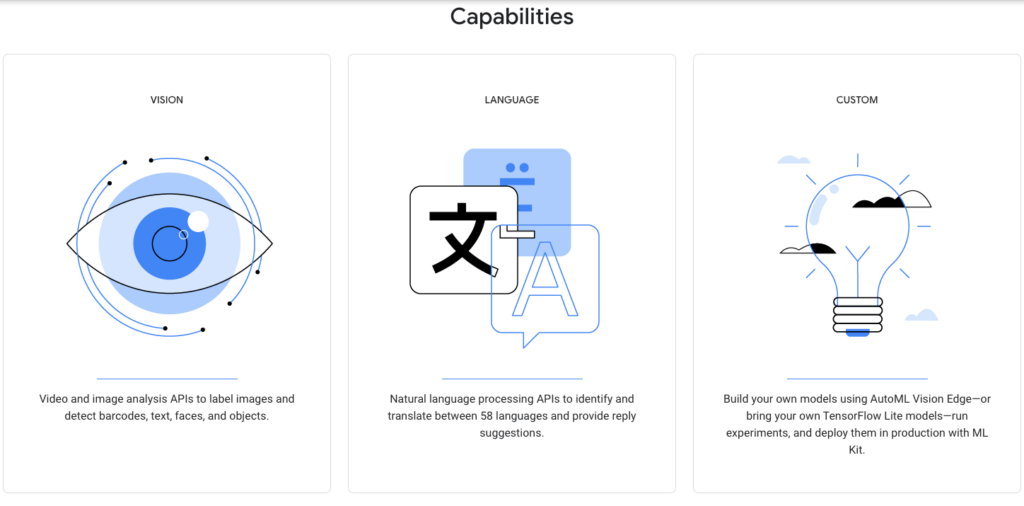

ML Kit (Google)

ML Kit is Google’s cross-platform framework and collection of APIs for mobile machine learning—both on-device, and via the cloud.

Here are the pre-trained APIs available:

- Vision: Barcode Scanning, Face Detection, Image Labeling, Landmark Detection, Object Detection and Tracking, Text Recognition

- Language: Language ID, Translation (both on-device and cloud-based), Smart Reply

- Custom: AutoML Vision Edge is Google’s platform for developing custom, mobile-ready models (which can also be used on other edge devices).

TensorFlow Lite Models

TensorFlow Lite, TensorFlow’s lightweight model framework for on-device ML, includes a number of pre-trained models that developers can easily download and drop into their apps. Though technically cross-platform, these models don’t tend to work as well as models built with Apple’s dedicated framework for iOS (Core ML — see below).

Here are the pre-trained models available: Image labeling, object detection, pose estimation, segmentation, style transfer, smart reply, text classification, question answering.

Core ML Model Zoo

While these models don’t come directly from the Core ML team, Apple has collected a wide range of community-built Core ML models that you can experiment with in your projects.

Models available: image labeling/classification, depth estimation, object detection, image segmentation, pose estimation, and question answering.

Additionally, here’s an “awesome” list of Core ML models on GitHub:

Other Resources

There are so many resources we could share, but here are a couple of additional ones we’ve put together that will hopefully inspire you even further.

- Awesome-Mobile-Machine-Learning: Our own “Awesome” list of all kinds of resources for mobile and edge ML. Tutorials, code, frameworks, publications to follow, and much more.

And this is where we’ll leave you, for now. We’d love to hear about your results when building these or any other projects. Drop us a note in the comments, or tag us on Twitter (@fritzlabs) with a GIF or short video of your demo, and we just might share it 🙂
