Humans have been trying to augment their reality since the 1990s, when Boeing developed its own AR system for assembling airplanes. The technology gained popularity with the launch of the first version of Google Glass. However, AR has been widely adopted in the consumer market only in the past five years, as handheld devices have become powerful enough to host such experiences.

AR has plenty of professional and industry-level applications, ranging from virtual shopping environments to preparation for space exploration; however, most people currently use it in the form of face filters and camera effects for editing photos and videos.

Additionally, AR games have recently gained popularity, with Pokémon GO being a well-known example. Many games, such as Minecraft, are planning to release AR-compatible versions via Apple’s AR platform.

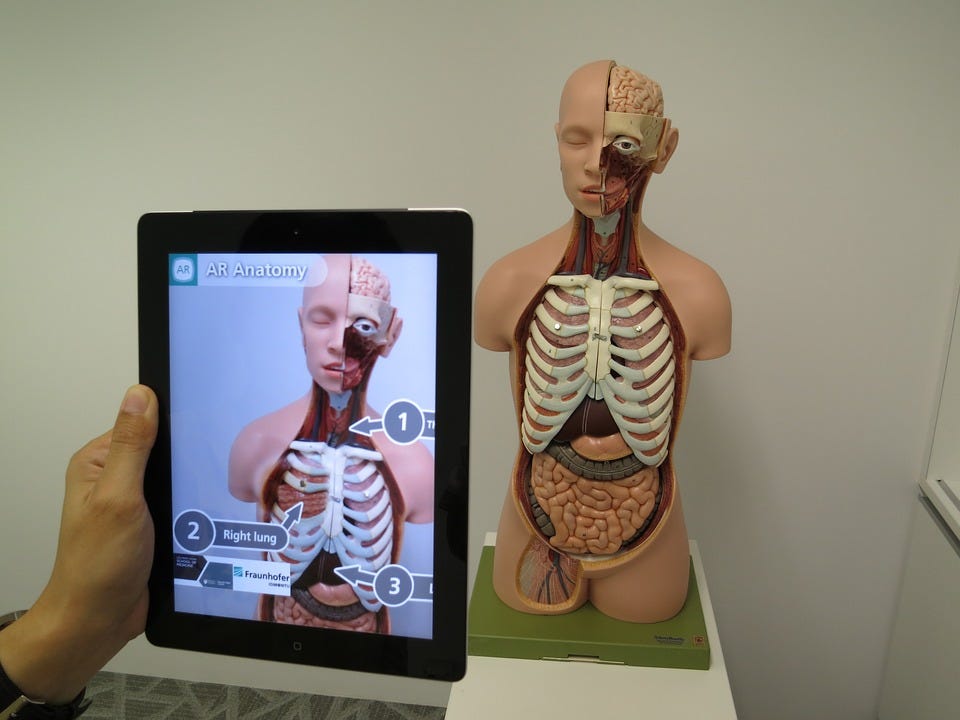

Medical sciences can also benefit from AR, as doctors can be guided by augmented projections over the patient. Combining these projections with AI algorithms during surgery could increase the accuracy and efficiency of various procedures and diagnoses.

Education is another field that AR could bring to life, with virtual animated 3D models or demonstrations popping out of books and making studies much more fun and immersive. This could introduce a new era of interactive learning, which could greatly benefit schools and educational institutes that cannot afford real 3D models.

However, the dream of an augmented world cannot be achieved without the amazing efforts of fellow artists and developers. Let’s dive into the process of developing augmented reality experiences.

AR Development

To reach its full potential, AR is, in many cases, dependent on machine learning. This is because ML helps to track flat surfaces or faces in video feeds onto which AR images and objects can be superimposed or projected. ML also allows us to track facial movement, differentiate between backgrounds and humans, and much more.

Even though machine learning is involved, it’s abstracted away by most AR development tools and frameworks, so developers only need to focus on the projections and not the tracking.
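To make that division of labor concrete, here is a minimal sketch in Python that is not tied to any real AR framework. It assumes the tracking step has already produced a face bounding box, and it only shows the part a filter developer would typically care about: deciding where an overlay image should be drawn. The `FaceBox` type and the `place_overlay` function are hypothetical names invented for this illustration.

```python
from dataclasses import dataclass


@dataclass
class FaceBox:
    """Hypothetical tracker output: a face bounding box in pixels."""
    x: int       # left edge of the face
    y: int       # top edge of the face
    width: int
    height: int


def place_overlay(face: FaceBox, overlay_aspect: float, scale: float = 1.0):
    """Return (left, top, width, height) for an overlay centered on the face.

    overlay_aspect is the overlay's height divided by its width.
    scale stretches the overlay relative to the face width.
    """
    width = round(face.width * scale)
    height = round(width * overlay_aspect)

    # Center of the tracked face, which the overlay should share.
    center_x = face.x + face.width / 2
    center_y = face.y + face.height / 2

    left = round(center_x - width / 2)
    top = round(center_y - height / 2)
    return left, top, width, height
```

In a real tool, the bounding box would come from the platform's tracker on every frame, and the returned rectangle would be handed to its renderer. The sketch only illustrates that the projection logic can be written without knowing how the tracking works.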

The hardware involved in AR

Before we jump into development tools and platforms, it’s important to briefly address the hardware on which AR experiences are being deployed. Smartphones and VR headsets are the primary devices currently using AR. While mobile is the more popular and consumer-friendly option, VR headsets offer a truly immersive AR experience.

Some of the leading VR headsets include Microsoft’s HoloLens, Facebook’s Oculus, and the HTC Vive.

To bring AR experiences to these devices, Google and Apple have spent the past few years actively developing AR tools and frameworks for their respective mobile platforms, Android and iOS.

But before we jump into what Apple and Google are up to, it’s worth taking a look at some of the most important publishing/content platforms for AR. Snapchat, Instagram, and Facebook are the largest platforms on mobile for AR camera filters. Let’s take a look at how you can develop and publish on these platforms.

Snapchat

Snapchat has been leading in terms of innovation with AR, providing users with everything from multiplayer AR games to pet filters.

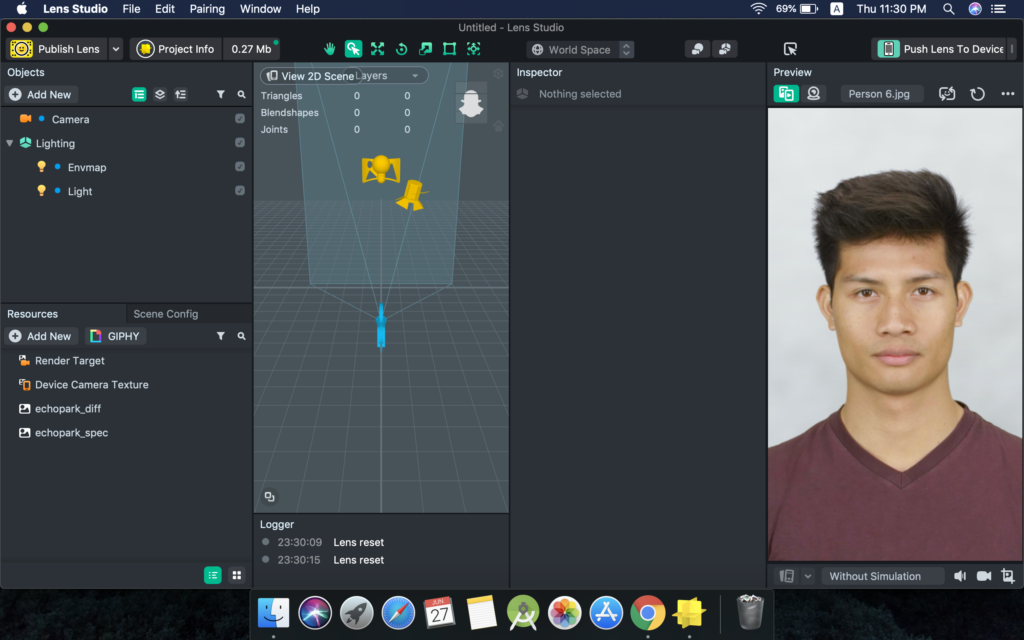

Snapchat started allowing third-party creators to publish lenses (their term for filters) in 2017, when it launched its lens creation tool, Lens Studio. Since then, more than 400,000 lenses have been created, and they have been used more than 15 billion times.

The latest version (v2.0) of Lens Studio allows anyone to create and publish lenses with ease. The platform is very user-friendly and one can even develop a fully-functional filter within seconds, without writing a line of code.

The Lens Studio documentation is awesome and super easy to understand. Almost all filters are created using sample templates provided by the Snapchat team, which can be customized by simply dragging and dropping objects and images on the screen and toggling their properties.

Advanced functionality and different interactions can be achieved using scripts written in JavaScript.

Lenses can be tested before publishing in the Snapchat app itself via the Lens Studio “push to device” option.

Lenses published by developers can be unlocked for 48 hours by scanning the Lens’s Snapcode (QR code) or by using a direct link.

Instagram & Facebook

The Facebook family of apps lags behind Snapchat in innovation; however, it is far ahead in reach. Together, Instagram and Facebook have a user base of over 3 billion people.

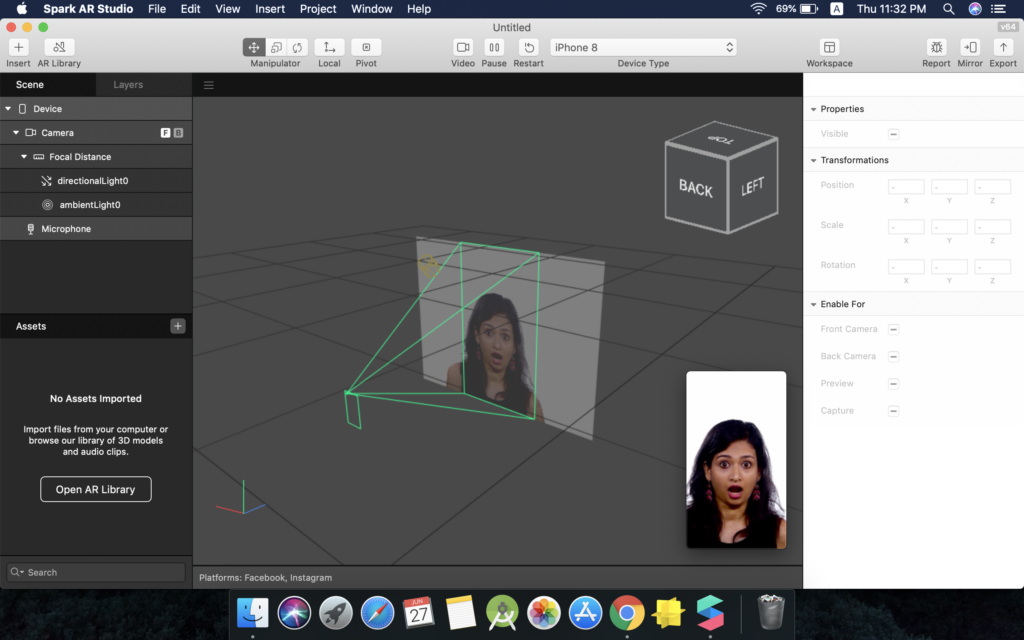

The tool used for creating and publishing on these platforms is Spark AR Studio.

At the F8 developer conference this year, Facebook announced that more than 1 billion unique people have used filters created using Spark AR Studio in the last year alone.

Filters on Facebook can be published by any creator; however, only individuals accepted into Instagram’s closed beta program can publish on Instagram.

Instagram is still testing third-party filters on its platform, looking for bugs and improving performance on a variety of devices. They plan to open the beta program in summer 2019.

Spark AR Studio has a steeper learning curve than Lens Studio, and the best way to learn it is by practicing. The documentation is easy to understand and has video tutorials and sample projects for various key concepts. The best place to find help when stuck is the amazing community in the Spark AR Facebook group.

Spark AR Studio features a drag-and-drop interface and, unlike Lens Studio, includes a visual programming interface called the Patch Editor. The Patch Editor makes it easy for non-programmers to implement advanced interactions. It also has a JavaScript scripting interface in case you want to use that instead of the Patch Editor (or if you want to code your own custom shaders).

Spark AR also has a dedicated app for both Android and iOS, which can be used to test your effects/filters prior to publishing.

Instagram and Facebook have a unique way of giving users access to filters by third-party creators. In order to permanently see a creator’s filter in your IG or FB camera, you have to follow them. This, in turn, helps the creator gain followers and stay motivated to create more filters.

Creators can also share their effects via direct links. For example, one of my own filters, ‘Lightning Eyes’, transforms you into the God of Thunder.

You can also follow me on Instagram @harshhc5 to get all my filters in your IG camera.

Augmented Reality for Mobile App developers

Lens Studio and Spark AR Studio are great tools for AR; however, they only allow you to create augmented experiences for their target platforms (Snapchat, Instagram, and Facebook).

If you wish to create your own native mobile apps using AR, you can use one of the following popular tools.

Apple’s ARKit

ARKit is Apple’s powerful AR framework. Together with tools like Reality Composer and RealityKit, it lets you develop AR experiences for Apple’s products without knowledge of 3D modeling. Plenty of apps and games on the App Store already use ARKit.

Apple launched the third generation of ARKit at WWDC this year, introducing features like real-time body tracking, people occlusion, full-body motion capture, and more.

ARKit includes plenty of the tools required to develop AR experiences, all of which are covered well in its excellent documentation.

Here are a few tutorials that will help you learn to use ARKit to build your own AR applications:

- Hand Detection with Core ML and ARKit

- Using ARKit on iOS to Build an Augmented Reality Shooter Game

- Using Core ML and ARKit to Build a Gesture-Based Interface iOS App

Google’s ARCore

ARCore offers a number of APIs for motion tracking, plane tracking, environment lighting, object tracking, detecting user interaction, and more for supported Android and iOS devices. Some of its APIs also support the Unity and Unreal game engines.

Plenty of apps and tools developed using ARCore and Tilt Brush (Google’s 3D drawing tool) can be found here.

Here is an awesome tutorial about creating an Android App using ARCore by Calum Gathergood:

Unity for Mobile AR

Unity is a famous 3D game engine that has a great visual interface for creating augmented experiences that rely on ARKit and ARCore to run on mobile devices. It’s also one of the best platforms for developing AR and VR games.

Here’s a great tutorial by Shivang Chopra on drawing lines in 3D space using Unity:

Conclusion

Augmented Reality is a new digital trend of our generation and is only going to get more popular in the foreseeable future. Imagine, as one example, being able to shoot entire sci-fi movies using AR technology, without the requirement of building physical sets and wearing expensive makeup and costumes.

Various brands are looking for AR developers to advertise their products in unique ways. It’s definitely a great time to add AR to your resume.

This post offered insight into the world of AR and its potential in the future. The next few posts are going to teach you how to develop and publish different types of AR camera filters on Snapchat, Instagram, and Facebook.
